AI Infrastructure

AI infrastructure encompasses the physical and logical foundations required to train, run, and serve AI models: AI accelerators (GPUs, TPUs, custom ASICs), datacenters (cooling, power, networking), storage, and software stacks (CUDA, XLA, TensorRT, and similar).

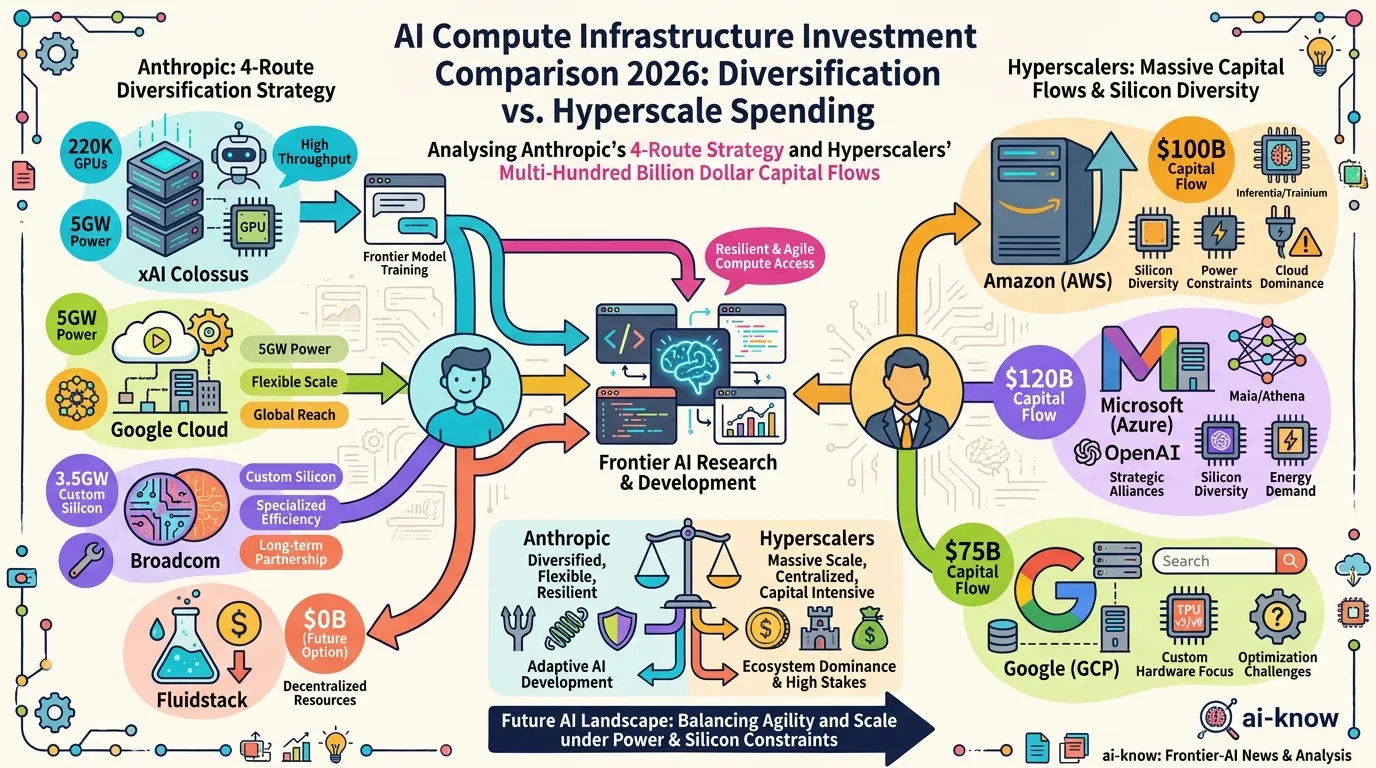

In 2026, three dynamics dominate: the scale of capital expenditure ($690B+ in annual hyperscaler investment), power procurement bottlenecks (Microsoft’s $80B backlog), and the shift from NVIDIA-only to multi-silicon strategies (Google TPU, AWS Trainium, Broadcom custom chips). Control of AI infrastructure is increasingly treated as equivalent to control of AI capability itself.

※ Auto-generated stub by ai-theme-roundup. Needs further completion.