AI Compute Infrastructure 2026: Anthropic's $50B Pledge, the xAI Colossus Deal, and Google-Broadcom's 8.5GW Bet

Hyperscalers are committing $690B in a single year while power constraints emerge as the hard ceiling — a full map of the AI infrastructure arms race

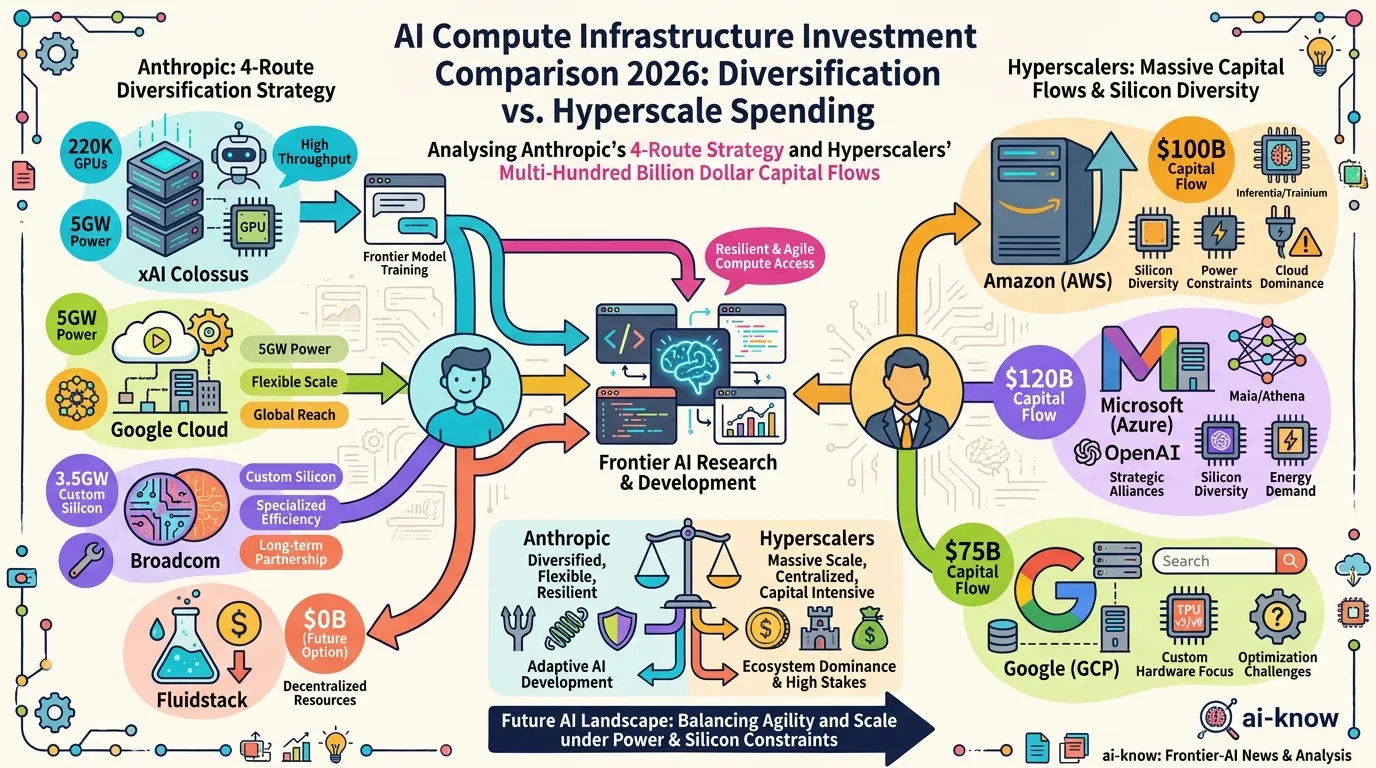

The scale of 2026’s AI infrastructure investment defies easy comprehension. The five largest US hyperscalers — Amazon, Microsoft, Alphabet, Meta, and Oracle — have collectively committed $660–690 billion in capital expenditure for 2026 alone, with roughly 75% targeting AI infrastructure. This is not cloud expansion; it is a race for computational sovereignty.

Yet the most strategically interesting player in this race is not the largest spender. Anthropic, with its multi-route approach to compute procurement, has assembled a portfolio of datacenter partnerships that no other AI model company has attempted. This article maps the major players and their infrastructure bets — and what it means for AI developers and enterprise buyers.

Key Players

Anthropic: Four-Route Diversified Strategy

Anthropic is simultaneously securing compute through four independent channels — a model that reflects both the urgency of its compute needs and an explicit hedge against single-vendor dependency.

1. xAI Colossus 1 lease (May 2026): Anthropic signed a deal to use the entire compute capacity of xAI’s Colossus 1 datacenter in Memphis, Tennessee, a former Electrolux factory that xAI converted into a supercomputer hub in 2024. The deal gives Anthropic access to more than 220,000 NVIDIA GPUs and 300 megawatts of power within a month of the announcement. Elon Musk commented on the deal: “No one set off my evil detector.” xAI is keeping the larger Colossus 2 for its own work.

2. US domestic datacenters with Fluidstack ($50B, November 2025–): Anthropic committed $50 billion to American AI infrastructure, starting with Fluidstack-operated facilities in Texas and New York. Sites are coming online throughout 2026, creating roughly 800 permanent and 2,400 construction jobs. The program aligns with the Trump administration’s AI Action Plan for domestic technology leadership.

3. Google Cloud 5-year deal + $40B Google investment: Google invested up to $40 billion in Anthropic in cash and compute credits. Simultaneously, Anthropic secured access to 5 gigawatts of Google Cloud capacity over five years at a committed price of approximately $200 billion total — and access to Google TPU chips through the Broadcom partnership below.

4. Broadcom custom silicon (from 2027): Broadcom is developing custom tensor processing units for both Anthropic and Google, delivering 3.5 gigawatts of additional compute capacity beginning in 2027. Analyst estimates put Broadcom’s revenue from Anthropic at $21 billion in 2026 and $42 billion in 2027 — a single partnership reshaping Broadcom’s entire business profile.
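The capacity and price figures in the four routes above can be cross-checked with back-of-envelope arithmetic. The sketch below uses only numbers quoted in this section. Two assumptions are worth flagging. First, it treats Colossus 1's 300 MW as total facility power (GPUs plus cooling, networking, and host systems). Second, it spreads the roughly $200 billion Google Cloud price evenly over a full 5 GW for all five years, which ignores any ramp-up schedule.

```python
# Back-of-envelope checks on the compute figures quoted for Anthropic's routes.
# Inputs come from the article; reading 300 MW as all-in facility power is an assumption.

COLOSSUS1_POWER_W = 300e6      # 300 megawatts
COLOSSUS1_GPUS = 220_000       # "220,000+" NVIDIA GPUs (a lower bound)

GOOGLE_CLOUD_GW = 5.0          # Google Cloud capacity over five years
BROADCOM_GW = 3.5              # Broadcom custom-silicon capacity from 2027
GOOGLE_CONTRACT_USD = 200e9    # ~$200B committed price
CONTRACT_YEARS = 5


def watts_per_gpu(power_w: float, gpus: int) -> float:
    """Facility power divided across GPUs (an upper bound, since the GPU count is a floor)."""
    return power_w / gpus


def usd_per_gw_year(total_usd: float, gw: float, years: int) -> float:
    """Average contract price per gigawatt of capacity per year, assuming no ramp-up."""
    return total_usd / (gw * years)


if __name__ == "__main__":
    print(f"Colossus 1: ~{watts_per_gpu(COLOSSUS1_POWER_W, COLOSSUS1_GPUS):,.0f} W per GPU, all-in")
    print(f"Google Cloud + Broadcom: {GOOGLE_CLOUD_GW + BROADCOM_GW} GW")
    per_gw_year = usd_per_gw_year(GOOGLE_CONTRACT_USD, GOOGLE_CLOUD_GW, CONTRACT_YEARS)
    print(f"Google Cloud deal: ~${per_gw_year / 1e9:.0f}B per GW-year")
```

Run as a script, it reports roughly 1,364 W per GPU, a combined 8.5 GW for the Google Cloud and Broadcom capacity, and about $8B per GW-year for the Google Cloud deal. The 8.5 GW total appears to be the source of the 8.5GW figure in the headline.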

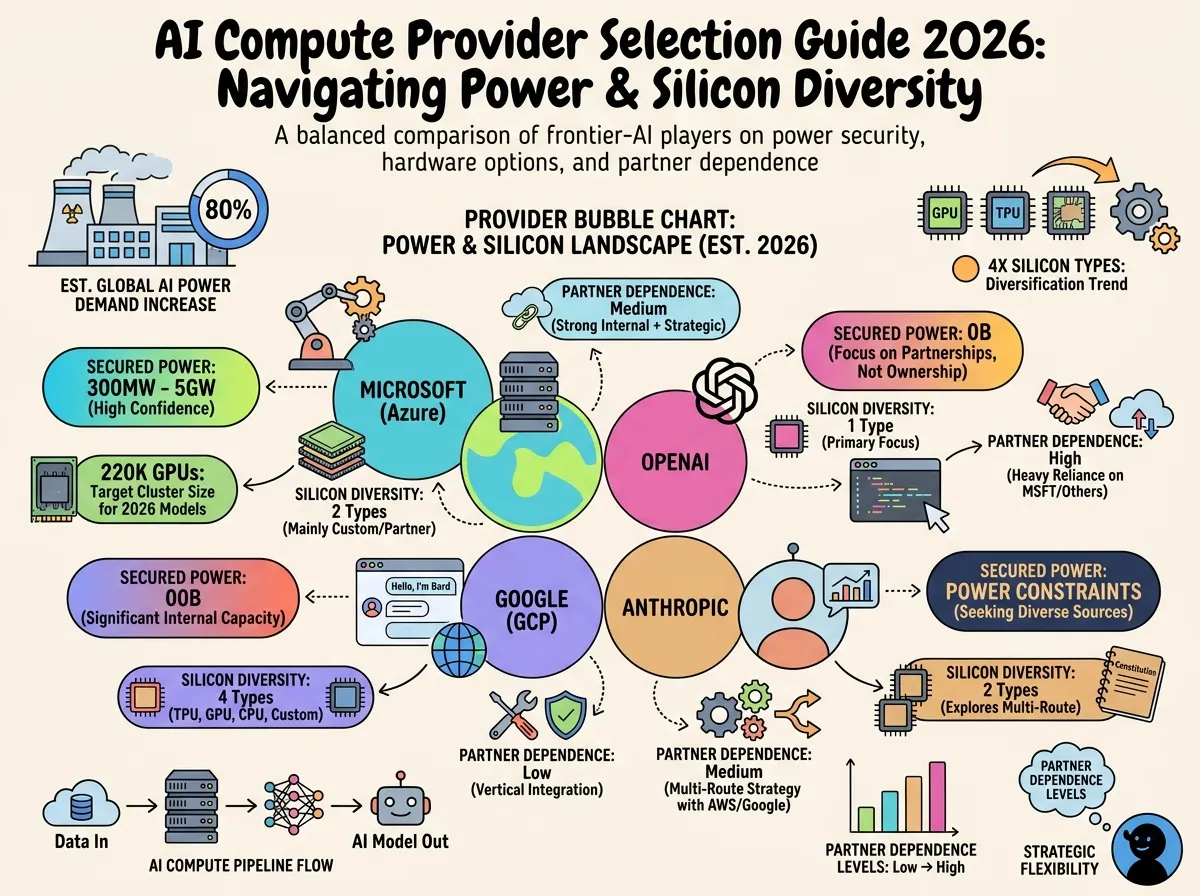

The result: counting the AWS Trainium capacity it already uses, Anthropic trains and runs Claude on four distinct silicon types (AWS Trainium, Google TPUs, NVIDIA GPUs, and, from 2027, Broadcom custom ASICs). No single-vendor strategy can match that flexibility.

OpenAI: $50B in Single-Year Compute Spending

OpenAI projects spending $50 billion on compute in 2026 alone, roughly double its estimated 2026 revenue. The company has secured over $1.4 trillion in long-term infrastructure commitments through deals with NVIDIA, Broadcom, Oracle, and major cloud providers. The gap between revenue and compute cost signals a bet that revenue growth will catch up with the infrastructure build-out rather than constrain it.
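The revenue-to-spend gap can be made concrete with two divisions. The sketch below derives the implied 2026 revenue and the number of years of 2026-level spending that the long-term commitments represent. It assumes "roughly double" means exactly 2× and treats the $1.4 trillion as a flat total, because the article does not give its schedule.

```python
# Implied figures behind OpenAI's 2026 revenue-to-spend gap (inputs from the article).
# Assumption: "roughly double" is taken as exactly 2x.

COMPUTE_2026_USD = 50e9             # projected 2026 compute spend
SPEND_TO_REVENUE_RATIO = 2.0        # compute spend / revenue (approximate)
LONG_TERM_COMMITMENTS_USD = 1.4e12  # "over $1.4 trillion" in commitments


def implied_revenue(spend_usd: float, ratio: float) -> float:
    """Revenue implied by a given spend and spend-to-revenue ratio."""
    return spend_usd / ratio


def years_of_spend(commitments_usd: float, annual_spend_usd: float) -> float:
    """Years of spending at a flat annual rate that the commitments represent."""
    return commitments_usd / annual_spend_usd


if __name__ == "__main__":
    revenue = implied_revenue(COMPUTE_2026_USD, SPEND_TO_REVENUE_RATIO)
    years = years_of_spend(LONG_TERM_COMMITMENTS_USD, COMPUTE_2026_USD)
    print(f"Implied 2026 revenue: ~${revenue / 1e9:.0f}B")
    print(f"Commitments = ~{years:.0f} years of 2026-level compute spend")
```

The result is an implied 2026 revenue of about $25B, and commitments equal to about 28 years of spending at the 2026 rate. Unless those commitments stretch over nearly three decades, they presuppose sharply rising annual spend, and therefore revenue, rather than a flat run rate.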

Microsoft: $120B+ with an $80B Power Problem

Microsoft is on track for $120 billion or more in capex in 2026. However, the company has disclosed an $80 billion backlog of Azure orders that cannot be fulfilled due to power constraints — a concrete illustration of the bottleneck that will define 2026’s second half. Cooling, grid access, and permitting timelines are limiting the speed at which new capacity can come online.

Alphabet/Google: $175B Across Own and Partner Infrastructure

Alphabet, Google DeepMind’s parent company, has budgeted $175 billion in 2026 capex, deployed across its own research and Cloud infrastructure plus the external supply to Anthropic. The Google Cloud / Broadcom / Anthropic triangle represents a notable externalization of Google’s chip and datacenter ecosystem.

Comparison Table

| Company | 2026 Capex / Compute Spend | Primary Silicon | Strategic Differentiator |
|---|---|---|---|
| Amazon / AWS | $200B | NVIDIA GPU + Trainium | Largest raw scale; supplies Anthropic Trainium |
| Alphabet / Google | $175B | Google TPU + GPU | Supplies Anthropic 5GW; dual internal + external model |
| Microsoft / Azure | $120B+ | NVIDIA GPU | $80B power-constrained backlog |
| Meta | $115–135B | NVIDIA GPU | Internal-only (Llama training, research) |
| Oracle | $50B | NVIDIA GPU | Primary OpenAI infrastructure partner |
| OpenAI | $50B (compute spend) | NVIDIA GPU + custom | Compute spend ≈ 2× 2026 revenue |
| Anthropic | $250B+ (5-yr plan) | GPU + TPU + ASIC (4 routes) | Only model company with multi-silicon diversification |
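As a consistency check, the five hyperscaler rows in the table above can be summed and compared with the $660–690 billion figure in the introduction. The sketch below treats Microsoft's "$120B+" as exactly $120B and applies the article's "roughly 75%" AI share as a flat 0.75. Both are simplifying assumptions.

```python
# Sum the five hyperscalers' 2026 capex ranges (in $B) from the comparison table
# and apply the ~75% share the article says targets AI infrastructure.

CAPEX_2026_BILLIONS = {
    "Amazon / AWS": (200, 200),
    "Alphabet / Google": (175, 175),
    "Microsoft / Azure": (120, 120),  # "$120B+" in the table; treated as exactly 120 here
    "Meta": (115, 135),
    "Oracle": (50, 50),
}
AI_SHARE = 0.75  # "roughly 75%" targeting AI infrastructure


def total_range(capex: dict) -> tuple:
    """Return (low, high) totals across all companies."""
    low = sum(lo for lo, _ in capex.values())
    high = sum(hi for _, hi in capex.values())
    return low, high


def ai_portion(low: float, high: float, share: float) -> tuple:
    """Scale a (low, high) range by the AI share."""
    return low * share, high * share


if __name__ == "__main__":
    low, high = total_range(CAPEX_2026_BILLIONS)
    ai_low, ai_high = ai_portion(low, high, AI_SHARE)
    print(f"Total 2026 capex: ${low}-{high}B")
    print(f"AI-directed (~75%): ${ai_low:.0f}-{ai_high:.0f}B")
```

The table sums to $660–680B, or more if Microsoft exceeds $120B, which is consistent with the $660–690B range cited at the top. Roughly $495–510B of that is aimed at AI.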

What This Means for AI Developers and Buyers

For Claude users and enterprise customers: the xAI Colossus 1 deal’s immediate effect was a significant increase in Claude usage limits, announced simultaneously with the compute partnership. Anthropic’s compute diversification means Claude availability is less likely to be constrained by any single partner’s capacity — a meaningful reliability advantage.

For cloud selection: alongside its multi-silicon compute strategy, Anthropic offers Claude APIs on AWS, Google Cloud, and Azure. Organizations concerned about lock-in can route Claude workloads through whichever hyperscaler they prefer without sacrificing model access.

For infrastructure planners: the power constraint, crystallized by Microsoft’s $80B fulfillment gap, means that compute scaling is no longer just a question of capital. New datacenter timelines are increasingly set by grid interconnection queues and permitting processes rather than construction speed. Site selection around power-rich regions (Texas’s ERCOT grid, nuclear-adjacent zones, data-center-friendly states) is becoming a core variable in AI strategy.
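The claim that grid queues, not construction speed, now set timelines is a critical-path argument. The minimal sketch below makes it explicit under two assumptions: permitting must finish before construction begins, and grid interconnection proceeds in parallel with both. The site names and month figures are invented for illustration and are not drawn from any real project.

```python
from dataclasses import dataclass


@dataclass
class SiteTimeline:
    """Months needed on each track for one candidate site (illustrative numbers only)."""
    name: str
    permitting_months: int
    construction_months: int
    grid_interconnect_months: int

    def build_path_months(self) -> int:
        # Assumption: construction cannot start until permits are in hand.
        return self.permitting_months + self.construction_months

    def months_to_power(self) -> int:
        # Grid interconnection runs in parallel; the site is usable when both paths finish.
        return max(self.build_path_months(), self.grid_interconnect_months)

    def binding_constraint(self) -> str:
        # Whichever path is longer determines the energization date (ties go to the grid).
        if self.grid_interconnect_months >= self.build_path_months():
            return "grid interconnection"
        return "permitting + construction"


if __name__ == "__main__":
    sites = [
        SiteTimeline("site-a (hypothetical)", permitting_months=12,
                     construction_months=18, grid_interconnect_months=48),
        SiteTimeline("site-a with 2x faster build", permitting_months=12,
                     construction_months=9, grid_interconnect_months=48),
        SiteTimeline("site-b (hypothetical)", permitting_months=6,
                     construction_months=24, grid_interconnect_months=20),
    ]
    for site in sorted(sites, key=SiteTimeline.months_to_power):
        print(f"{site.name}: {site.months_to_power()} months, bound by {site.binding_constraint()}")
```

Halving site A's construction time changes nothing, because the 48-month grid queue is the binding constraint. Site B has slower construction but a shorter queue, and it is powered 18 months sooner.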

What’s Ahead in 2026

Custom silicon wins share: The Anthropic-Broadcom deal, Google’s internal TPU program, and AWS Trainium all represent concrete moves away from pure NVIDIA dependency. By 2027, when Broadcom’s Anthropic-specific chips come online, the compute landscape will look materially different from today’s GPU monoculture.

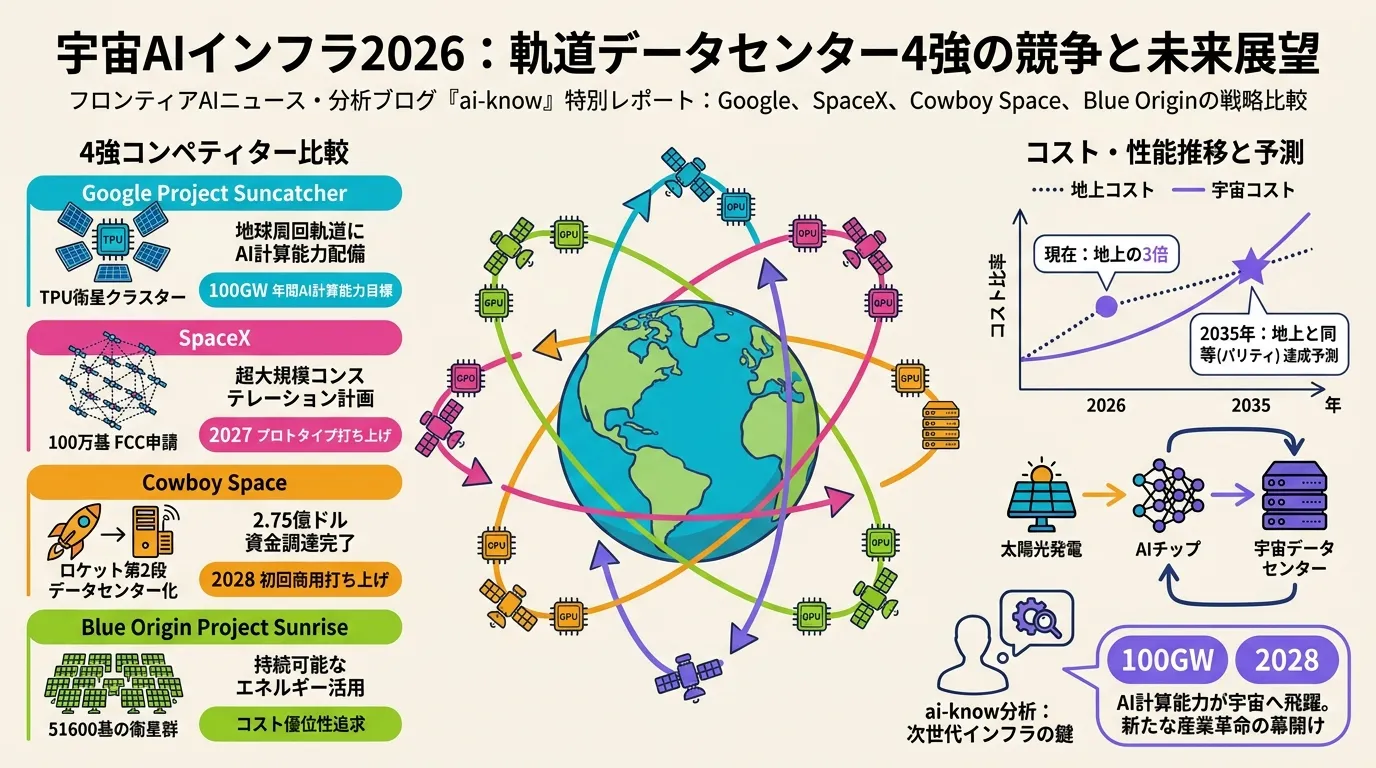

Orbital compute as a serious option: The Anthropic-SpaceX agreement included a stated interest in “multiple gigawatts of compute in space.” Orbital data centers would address two of the hardest terrestrial constraints, land and cooling, at the cost of launch complexity. The timeline is measured in years, but the strategic intent is real.

Power as the hard ceiling: Between 2026 and 2028, the question of who can procure and power datacenters faster than rivals will determine relative AI capability as much as algorithm research. Sovereign AI considerations — domestic manufacturing, allied-country sites, security clearance requirements — are now embedded in every hyperscaler’s site selection calculus.

Sources: Anthropic Invests $50B in American AI Infrastructure (Anthropic, 2025), Google & Broadcom Compute Partnership (Anthropic, 2026), Higher Usage Limits + SpaceX Deal (Anthropic, 2026), xAI Compute Partnership Announcement (xAI, 2026), Anthropic-Google-Broadcom TPU Deal (TechCrunch, 2026), Anthropic to Rent All Colossus 1 Compute (CNBC, 2026), AI CapEx 2026: The $690B Infrastructure Sprint (Futurum, 2026), Big Tech to Spend $700B on AI (Fortune, 2026), Broadcom $200B Google Cloud Deal for Anthropic (Bloomberg/BigGo, 2026)

Related Articles

Space-Based AI Infrastructure 2026: Comparing Google, SpaceX, Cowboy Space, and Blue Origin in the Race to Orbit

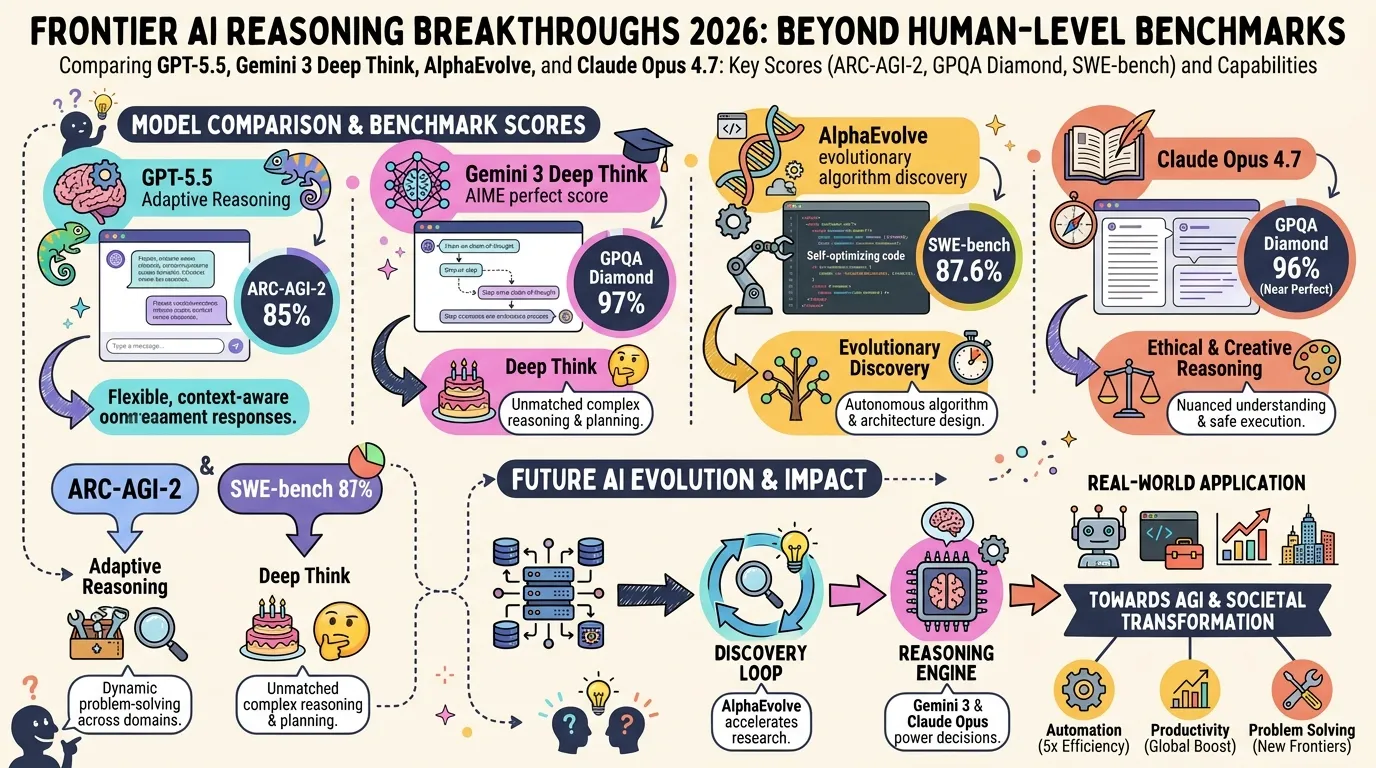

State of LLM Reasoning 2026: Comparing GPT-5.5, Gemini 3 Deep Think, and AlphaEvolve

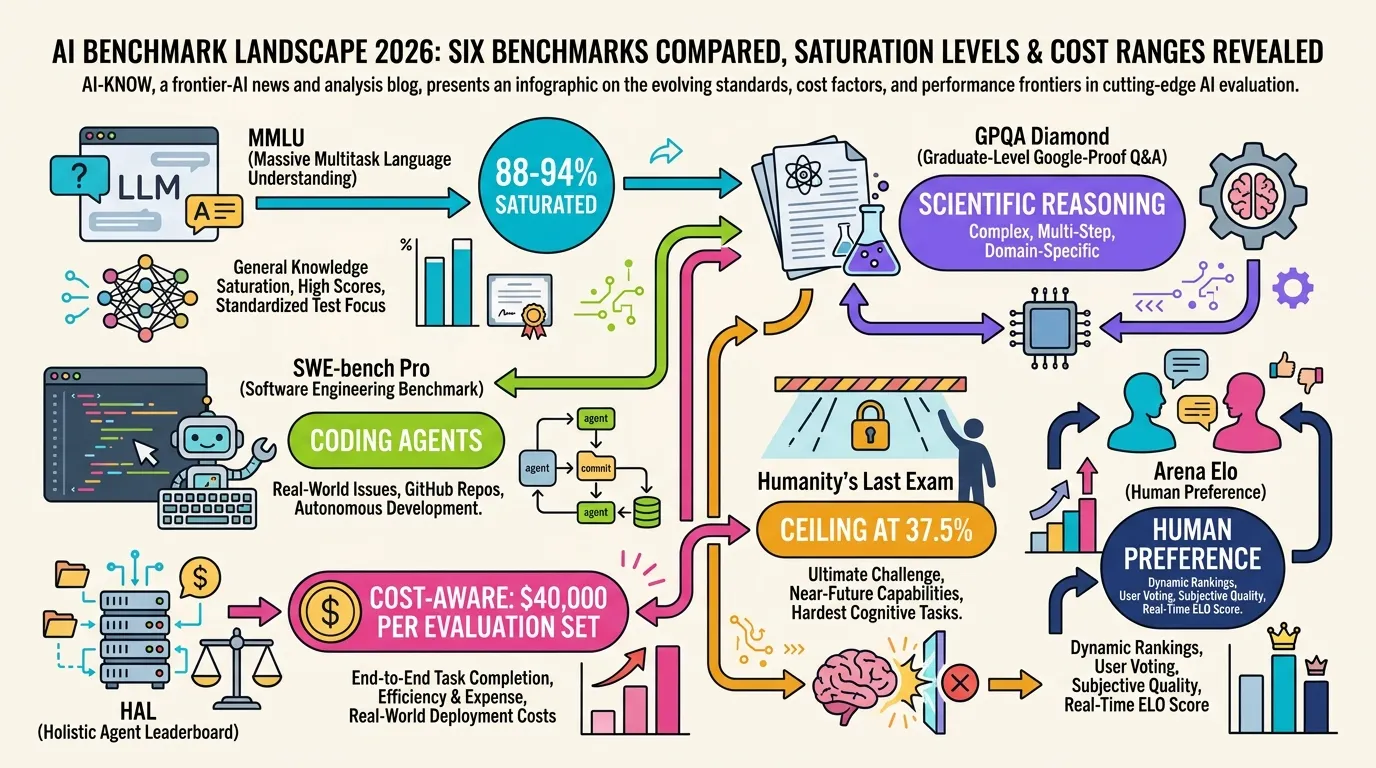

AI Evaluation in 2026: Beyond MMLU — A Practical Guide from SWE-bench Pro to HLE

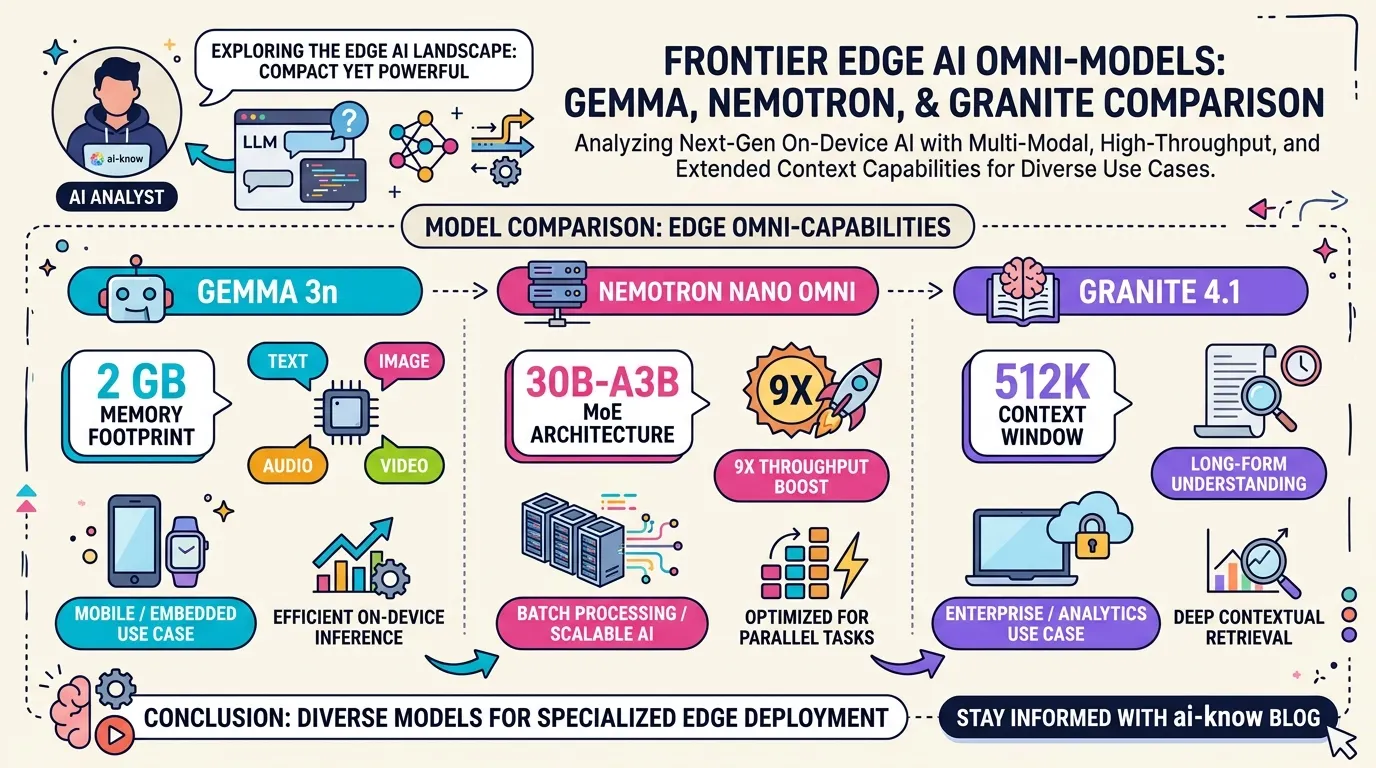

Edge Omni Models in 2026: Gemma 3n, Nemotron Nano Omni, and Granite 4.1 Compared

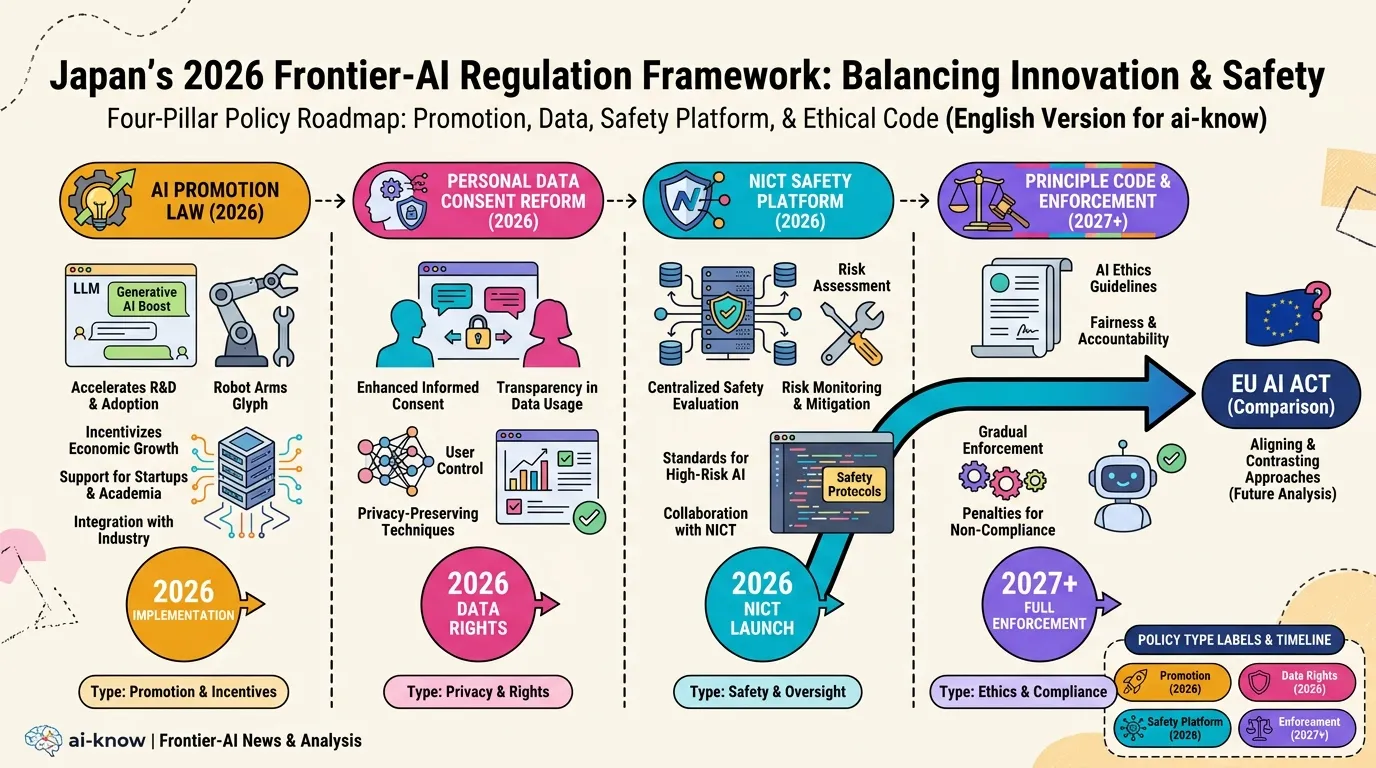

Japan AI Policy 2026: Promotion Law, Data Consent Reform, and the NICT Safety Platform