The State of AI Coding Agents in 2026: Architectural Convergence and the Context Engineering Era

Seven tools have converged on the same architecture — and the competitive axis has shifted from model capability to context management

The “Copilot vs. Cursor” frame of 2024 is over. The AI coding agent market in 2026 is defined by two mega-trends: architectural convergence and role specialization. The seven leading tools (Claude Code, Codex, Copilot, Gemini, Cursor, Devin, and Windsurf) have strikingly similar internal structures despite their different surface UIs. At that convergence point, the competitive axis has shifted from “which model powers it” to “how well it manages context.”

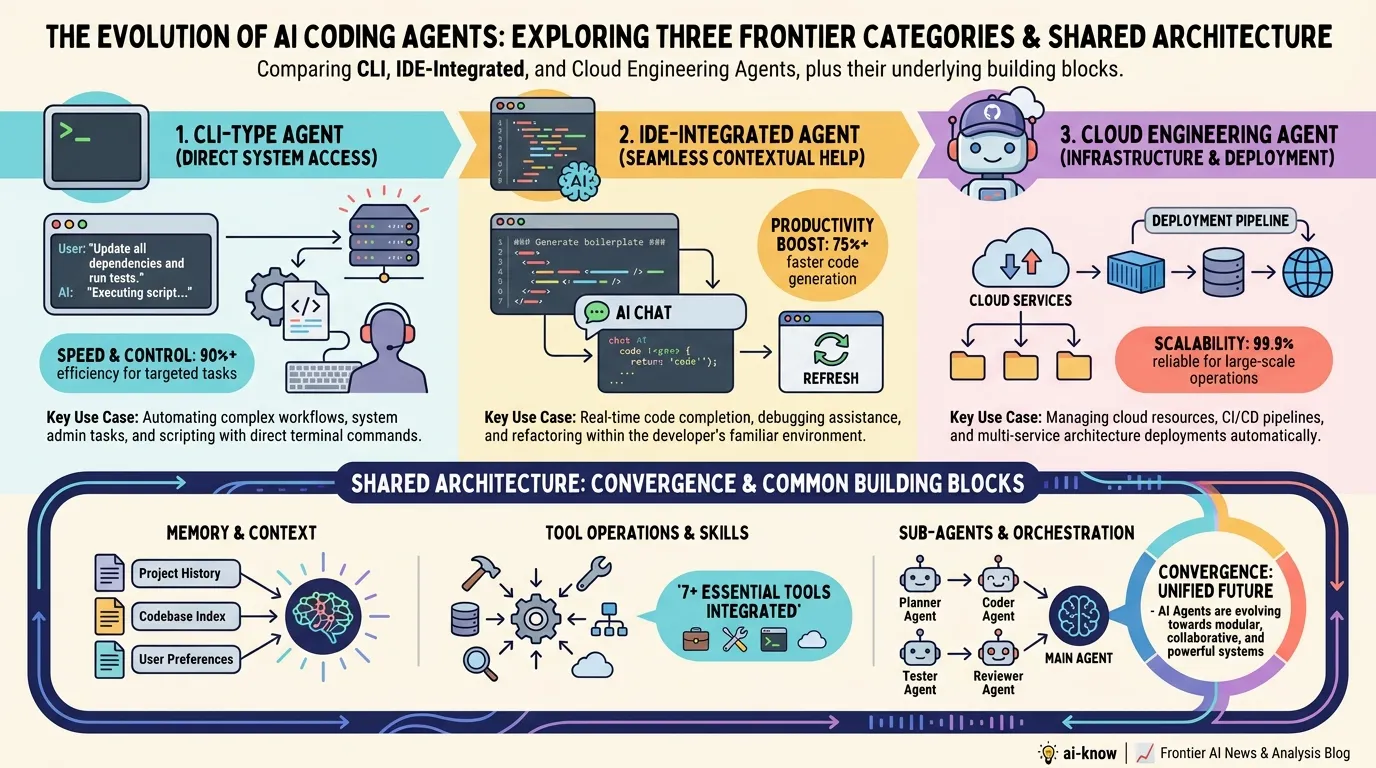

Three Tiers, Same Foundation

The market has crystallized into three clear categories:

CLI agents (led by Claude Code from Anthropic) operate in the terminal, excelling at full-repository reads, file operations, and test execution. They appeal to power users and backend engineers working with large, complex codebases.

IDE-integrated agents (GitHub Copilot from Microsoft-owned GitHub, Cursor, Windsurf) embed directly in the editor and specialize in multi-file editing and inline suggestions. Low onboarding friction makes them the default choice for individual contributors optimizing their daily coding velocity.

Cloud engineering agents (Devin from Cognition AI, OpenAI’s Codex) fully automate the loop from GitHub Issue triage to pull request creation. Running asynchronously, they’re designed for teams willing to shift engineers into a reviewer role, which presupposes CI/CD integration.

The Four Shared Building Blocks

Despite UI differences, all three tiers now ship the same four core components:

- Memory files: Persistent per-repository instructions (CLAUDE.md, cursor-rules.md) that encode project conventions, constraints, and domain knowledge. This is the backbone of Context Engineering.

- Tool access: A structured interface to Git, test runners, linting, and shell execution. The sophistication of tool call handling largely determines an agent’s effective autonomy.

- Sub-agent delegation / Multi-Agent Orchestration: Breaking large tasks into parallel subtasks assigned to specialized sub-agents. A practical workaround for single-agent context window limits.

- Long-running execution: Session management for tasks spanning minutes to hours. Native to cloud agents; CLI agents are rapidly catching up.
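To make the "tool access" building block concrete, here is a minimal sketch of a tool registry with a single dispatch point. It is not how any of the named products implement tool calls; the tool names (`git_status`, `run_tests`), the call shape (`{"name": ..., "params": ...}`), and the `ToolResult` type are all assumptions made for illustration. The `run_tests` entry also assumes pytest is installed in the project.

```python
import subprocess
import sys
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ToolResult:
    ok: bool       # True when the command exited with status 0
    output: str    # combined stdout and stderr, returned to the agent


def run_command(args: List[str], timeout: float = 60.0) -> ToolResult:
    """Run a command without a shell and capture its output."""
    try:
        proc = subprocess.run(
            args, capture_output=True, text=True, timeout=timeout
        )
    except (OSError, subprocess.TimeoutExpired) as exc:
        # Missing binaries and timeouts become ordinary failed results,
        # so the caller never has to handle exceptions from a tool.
        return ToolResult(ok=False, output=str(exc))
    return ToolResult(ok=proc.returncode == 0, output=proc.stdout + proc.stderr)


# Hypothetical registry: tool name -> handler taking a params dict.
TOOLS: Dict[str, Callable[[dict], ToolResult]] = {
    "git_status": lambda params: run_command(["git", "status", "--short"]),
    # Assumes pytest is available in the current interpreter's environment.
    "run_tests": lambda params: run_command(
        [sys.executable, "-m", "pytest", "-q", *params.get("paths", [])]
    ),
}


def dispatch(call: dict) -> ToolResult:
    """Route one structured tool call to its handler."""
    name = call.get("name")
    tool = TOOLS.get(name)
    if tool is None:
        return ToolResult(ok=False, output=f"unknown tool: {name}")
    return tool(call.get("params", {}))
```

Keeping every tool behind one `dispatch` function gives a single place to add logging, permission checks, or timeouts, which is one plausible reading of what "sophistication of tool call handling" means in practice.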

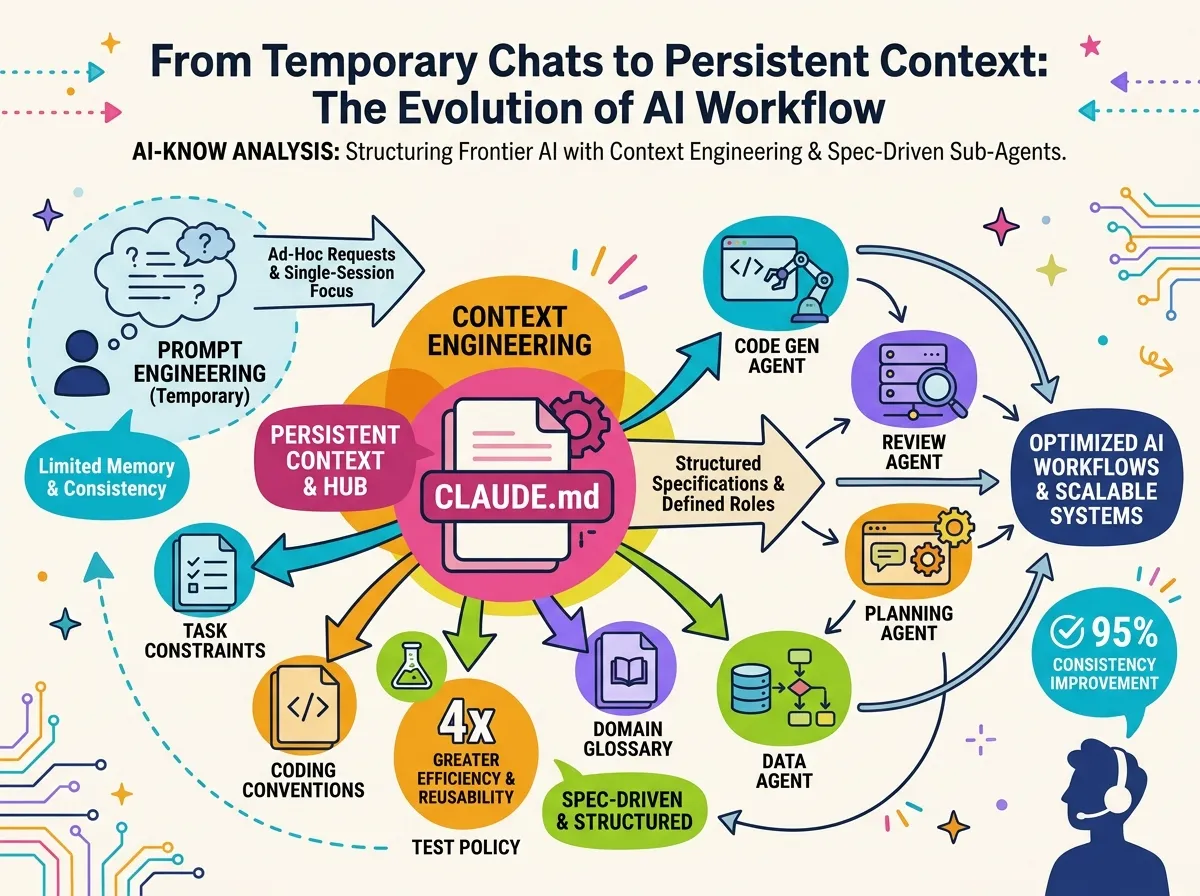

From Prompt Engineering to Context Engineering

The most consequential shift is in what developers actually do.

Prompt engineering was ephemeral — a session-level skill in phrasing good instructions. Context Engineering is an architectural discipline: designing and maintaining a structured set of files (specs, conventions, test policies, domain glossaries) that give any agent persistent, high-quality context.

The skill is no longer “how to write a good prompt.” It’s “how to architect context that makes every prompt better.”
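As a rough illustration of treating context as a maintained set of files, the sketch below assembles a context string from a prioritized list of documents under a size budget. Only CLAUDE.md comes from the article; the other file paths, the `build_context` name, and the character-based budget are hypothetical choices, and real agents may load and truncate context quite differently.

```python
from pathlib import Path
from typing import Iterable, List

# Hypothetical layout, listed from highest to lowest priority.
CONTEXT_FILES = [
    "CLAUDE.md",
    "docs/spec.md",
    "docs/conventions.md",
    "docs/test-policy.md",
    "docs/glossary.md",
]


def build_context(
    repo_root: Path,
    files: Iterable[str] = CONTEXT_FILES,
    max_chars: int = 20_000,
) -> str:
    """Concatenate the context files that exist, in priority order."""
    sections: List[str] = []
    remaining = max_chars
    for rel in files:
        path = repo_root / rel
        if not path.is_file():
            continue  # missing files are skipped, not treated as errors
        body = path.read_text(encoding="utf-8").strip()
        section = f"## {rel}\n{body}\n"
        if len(section) > remaining:
            break  # budget exhausted; higher-priority files were kept
        sections.append(section)
        remaining -= len(section)
    return "\n".join(sections)
```

The ordering of `CONTEXT_FILES` is the design decision that matters here: when the budget runs out, whatever sits lower in the list is what the agent never sees.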

The emergence of CLAUDE.md-style memory files as a de facto OSS standard carries a subtle strategic implication: Anthropic may be positioning itself to own the specification layer of the ecosystem. If this format becomes the canonical interface, Anthropic gains influence regardless of which underlying model runs the agent.

The Commoditization Dynamic

Through 2024, “which model powers it” was the primary differentiator. By 2026, competition has moved to four new axes:

- Memory system design: How context is structured, compressed, and retrieved across long sessions

- Tool ecosystem depth: Quality and breadth of integrations with IDEs, CI/CD, Jira, Slack, and more

- Orchestration sophistication: Task decomposition, delegation pipelines, and result aggregation in Multi-Agent Orchestration workflows

- IDE UX: The micro-interactions that define pair-programming feel

Frontier model commoditization puts downward price pressure across the AI infrastructure stack while simultaneously inflating the value of the context management layer — the opposite of what most observers predicted two years ago.

Three Things to Do Now

1. Start writing specs, not just prompts. Begin a CLAUDE.md in your repo today. Documenting task constraints, forbidden patterns, and coding conventions will improve agent precision immediately.

2. Match the tool category to the job. CLI agents for power-user, large-scope tasks; IDE agents for daily IC velocity; cloud agents for async backlog processing. Mixing all three into a single workflow is where the organizational leverage compounds.

3. Pilot sub-agent delegation. For large refactors or parallel test generation that exceed single-agent context limits, a Multi-Agent Orchestration trial is worth running — even a manual version that orchestrates two Claude Code sessions against the same repo.
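For the delegation pilot in step 3, a fan-out and fan-in loop can be sketched in a few lines. Everything here is an assumption for illustration: the `Subtask` type, splitting work by top-level directory, and especially `stub_subagent`, which only returns a description of what it would do. In a real trial that function would be replaced by whatever starts an actual agent session.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Subtask:
    name: str
    files: List[str]
    instruction: str


def split_by_directory(files: List[str], instruction: str) -> List[Subtask]:
    """Group files by top-level directory so each sub-agent gets a smaller slice."""
    groups: Dict[str, List[str]] = {}
    for f in files:
        top = f.split("/", 1)[0] if "/" in f else "."
        groups.setdefault(top, []).append(f)
    return [
        Subtask(name=top, files=sorted(paths), instruction=instruction)
        for top, paths in sorted(groups.items())
    ]


def stub_subagent(task: Subtask) -> str:
    # Placeholder worker: a real pilot would launch an agent session here.
    return (
        f"[{task.name}] would apply '{task.instruction}' "
        f"to {len(task.files)} file(s)"
    )


def orchestrate(
    tasks: List[Subtask],
    worker: Callable[[Subtask], str] = stub_subagent,
    max_workers: int = 2,
) -> Dict[str, str]:
    """Run the subtasks in parallel and collect results keyed by subtask name."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(worker, tasks))  # map preserves input order
    return {task.name: result for task, result in zip(tasks, results)}
```

With `max_workers=2` this mirrors the manual two-session trial described above. The aggregation step is deliberately trivial; merging conflicting edits from parallel sub-agents is the harder problem a pilot would surface.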

As architecture converges, the next differentiator will not be which tool an organization chooses, but how quickly it develops the organizational discipline to design context well.

Related Articles

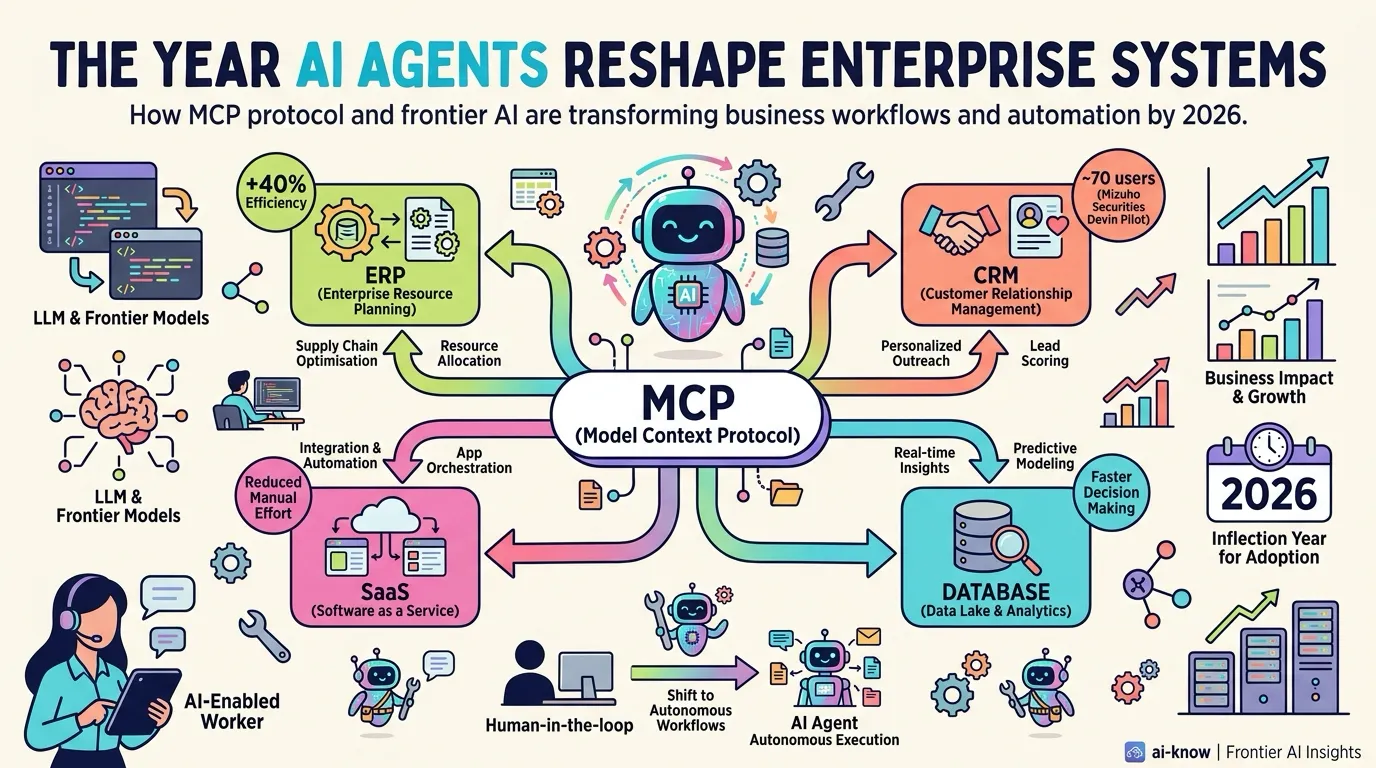

The Year AI Agents Reshape Enterprise Systems — MCP Becomes the Connectivity Standard

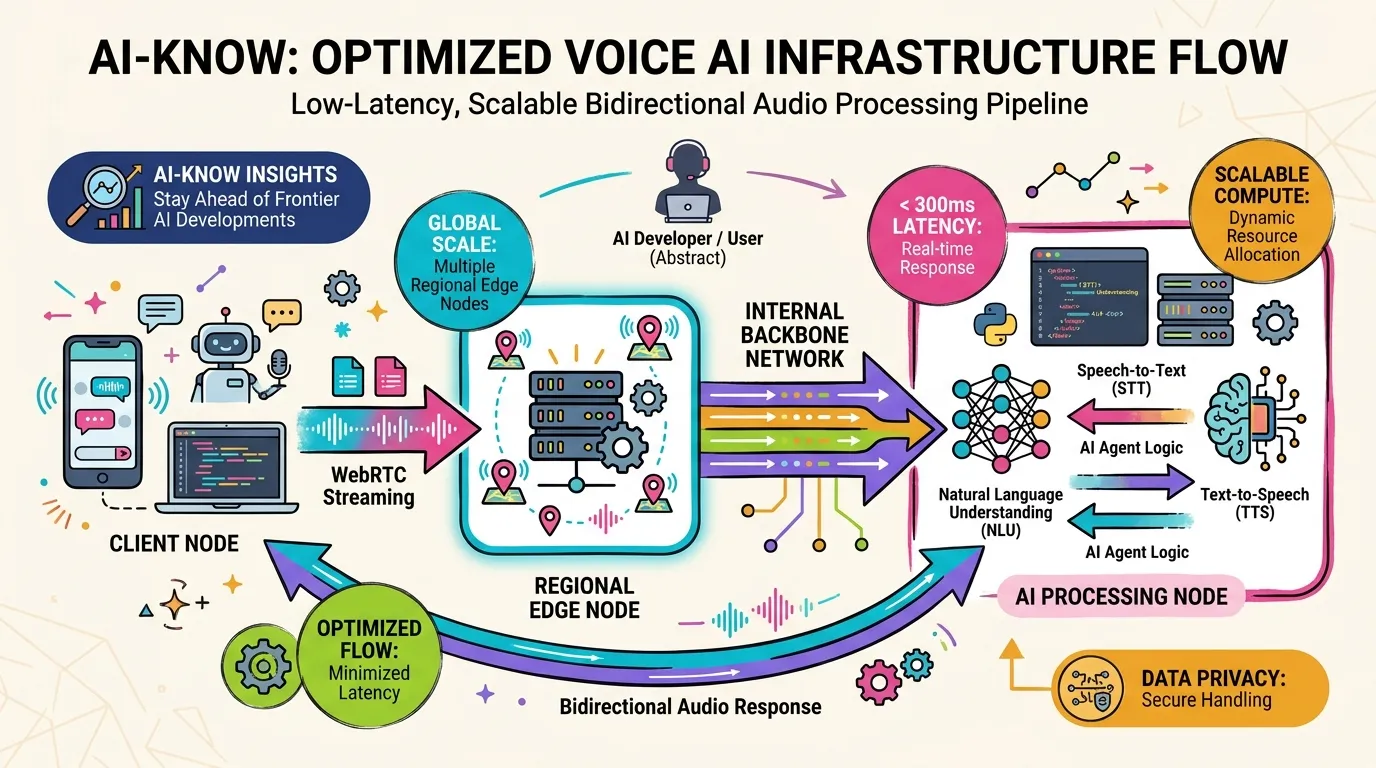

How OpenAI Rebuilt Its WebRTC Stack from Scratch to Deliver Low-Latency Voice AI at Scale

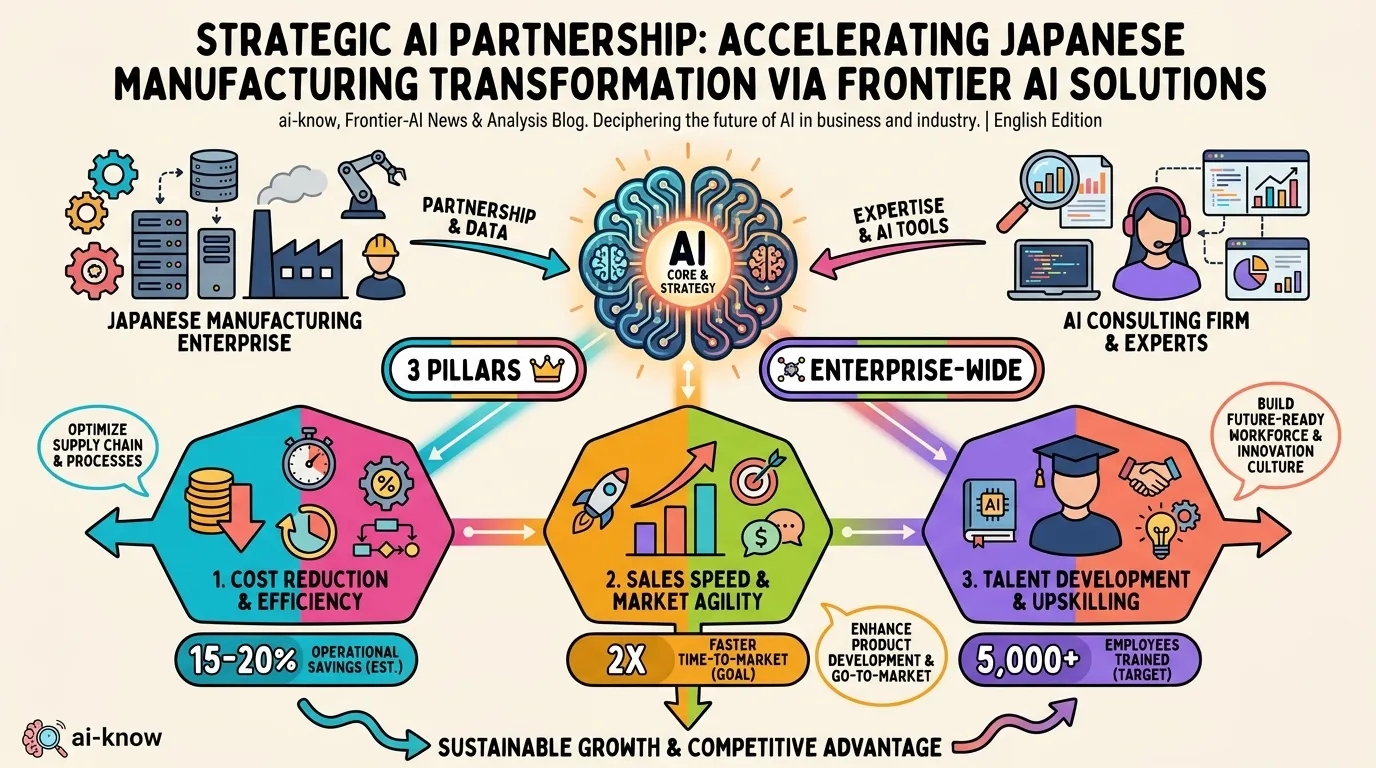

Accenture × NSK: AI-Driven Business Reinvention Moves to Full Scale in Japanese Manufacturing