EU AI Act Amendment Bans Non-Consensual AI-Generated Sexual Images

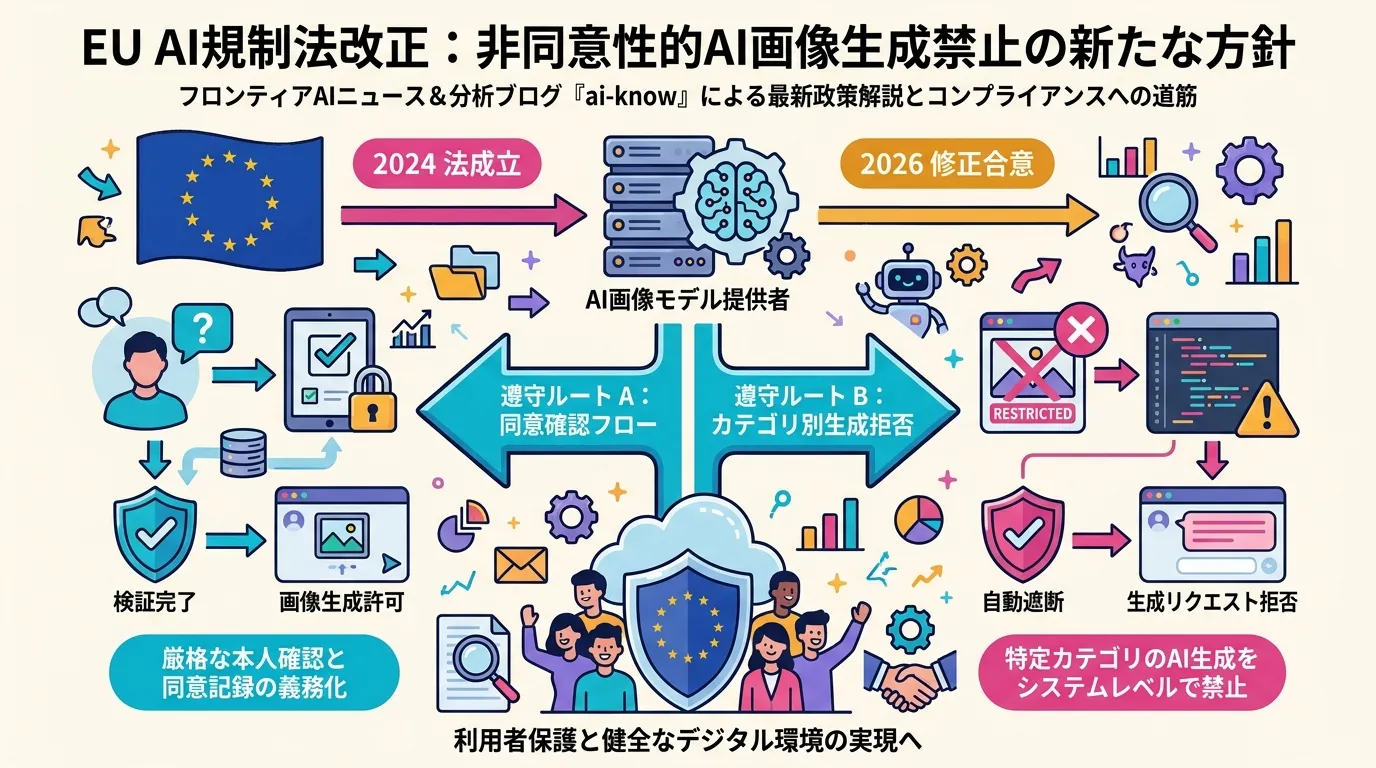

A provisional agreement between the European Parliament and Council adds an explicit prohibition on non-consensual synthetic sexual content, and it could require image model providers to implement consent verification or generation refusal systems.

The European Parliament and Council have reached a provisional agreement on an amendment to the EU AI Act that, for the first time in major EU law, explicitly prohibits the creation and distribution of AI-generated sexual images without the consent of the depicted person. The amendment addresses a critical gap in the original 2024 EU AI Act, which established a risk-based classification framework for AI systems but lacked an explicit ban on the act of generating synthetic sexual content.

The implications reach well beyond content moderation. By potentially targeting model providers themselves — not just distribution platforms — the EU is signaling a shift toward upstream AI regulation.

Why This Amendment Matters

The proliferation of high-fidelity image generation models like Stable Diffusion, Flux, and DALL-E has made it technically trivial to create realistic synthetic sexual imagery of real individuals. The common attack vector: open-source LoRA adapters trained on a target person’s photos, plugged into any capable diffusion model. The result is what advocates call “deepfake pornography,” and documented cases have surged across Europe.

The EU already had relevant law on the books. The Digital Services Act (DSA) requires platforms to remove such content once reported. But the DSA addresses distribution, not generation. There was no EU-level rule that said: “You may not produce this content in the first place.” The AI Act amendment fills that gap.

The EU AI Act was designed on a risk-tiered principle with four levels: unacceptable, high, limited, and minimal risk. Non-consensual sexual image generation is now being slotted into the “unacceptable” category, making it a prohibited use case rather than a managed-risk one.

What Compliance Could Look Like for Model Providers

The specific penalty structure and provider obligations are still to be determined in secondary legislation, but the provisional agreement points to two likely compliance pathways for image generation model providers:

Option A: Pre-generation consent verification. Before generating any image of a real person, the system must verify that explicit consent from the depicted individual exists. This is technically complex — it implies identity verification, consent ledgering, and potentially a real-time lookup at inference time.
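To make the "consent ledgering" and "lookup at inference time" ideas concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the amendment does not specify a data model, and `ConsentRecord`, `ConsentLedger`, `may_generate`, and the `"sexual_content"` scope label are hypothetical names. Identity verification, the hard part, is assumed to have happened upstream and to have produced the subject IDs.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timezone
from typing import Dict, Iterable, Optional, Tuple


@dataclass(frozen=True)
class ConsentRecord:
    """One consent grant (hypothetical schema, not taken from the amendment)."""
    subject_id: str                        # ID produced by an upstream identity check
    scope: str                             # e.g. "sexual_content" (illustrative label)
    expires_at: Optional[datetime] = None  # timezone-aware; None means no expiry
    revoked: bool = False


class ConsentLedger:
    """In-memory stand-in for a real, audited consent store."""

    def __init__(self) -> None:
        self._records: Dict[Tuple[str, str], ConsentRecord] = {}

    def grant(self, record: ConsentRecord) -> None:
        self._records[(record.subject_id, record.scope)] = record

    def revoke(self, subject_id: str, scope: str) -> None:
        key = (subject_id, scope)
        if key in self._records:
            # Keep the record but mark it revoked, so the history is preserved.
            self._records[key] = replace(self._records[key], revoked=True)

    def has_consent(self, subject_id: str, scope: str,
                    now: Optional[datetime] = None) -> bool:
        now = now or datetime.now(timezone.utc)
        record = self._records.get((subject_id, scope))
        if record is None or record.revoked:
            return False  # fail closed: no record means no consent
        return record.expires_at is None or now < record.expires_at


def may_generate(ledger: ConsentLedger, subject_ids: Iterable[str], scope: str) -> bool:
    """Allow generation only if every identified person has valid consent.

    Note: an empty subject list returns True, meaning this check alone does
    not block requests in which no real person was identified.
    """
    return all(ledger.has_consent(sid, scope) for sid in subject_ids)
```

In this sketch a single missing, revoked, or expired record blocks the whole request, which reflects a fail-closed design choice rather than anything the provisional text mandates.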

Option B: Category-level generation refusal. The model or API refuses requests that the system classifies as likely to produce sexual content of real identifiable individuals. This puts the burden on robust content classification and prompt interpretation, which is imperfect at the edges.
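A refusal gate of this kind can be sketched in Python as a check that combines two classifier scores. This is an illustrative sketch only: `refusal_gate`, `GateDecision`, the two scorer functions, the keyword lists, and the 0.5 thresholds are all hypothetical, and a production system would use trained classifiers rather than the toy keyword stubs shown here.

```python
from dataclasses import dataclass
from typing import Callable

# A scorer maps a prompt to a score in [0, 1]. Real systems would plug in
# trained classifiers; the stubs below are toy placeholders.
Scorer = Callable[[str], float]


@dataclass(frozen=True)
class GateDecision:
    allowed: bool
    reason: str


def refusal_gate(prompt: str,
                 sexual_score: Scorer,
                 real_person_score: Scorer,
                 sexual_threshold: float = 0.5,
                 person_threshold: float = 0.5) -> GateDecision:
    """Refuse only when BOTH signals fire: sexual content AND a real person."""
    is_sexual = sexual_score(prompt) >= sexual_threshold
    is_real_person = real_person_score(prompt) >= person_threshold
    if is_sexual and is_real_person:
        return GateDecision(False, "likely sexual content of an identifiable real person")
    return GateDecision(True, "no prohibited combination detected")


# --- Toy stubs (hypothetical; for demonstration only) ---
SEXUAL_TERMS = {"nude", "explicit"}
KNOWN_PEOPLE = {"jane example"}  # placeholder name, not a real individual


def toy_sexual_score(prompt: str) -> float:
    text = prompt.lower()
    return 1.0 if any(term in text for term in SEXUAL_TERMS) else 0.0


def toy_real_person_score(prompt: str) -> float:
    text = prompt.lower()
    return 1.0 if any(name in text for name in KNOWN_PEOPLE) else 0.0


if __name__ == "__main__":
    for p in ["nude photo of Jane Example",
              "portrait of Jane Example",
              "n*de photo of Jane Example"]:  # trivial evasion of the keyword stub
        print(p, "->", refusal_gate(p, toy_sexual_score, toy_real_person_score))
```

The third prompt slips past the keyword stub, which illustrates why prompt-level classification alone is imperfect at the edges.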

Neither path is trivial at scale. Prompt-level intervention is gameable; post-hoc detection catches only what it can recognize; removing the capability from training data affects model versatility. The technical choices each provider makes will likely vary and will generate significant industry commentary.

AI governance frameworks in other jurisdictions, including the UK’s Online Safety Act, Australia’s eSafety Commissioner rules, and Japan’s emerging AI promotion legislation, do not yet have equivalent provisions. The EU is writing what may become the global reference standard.

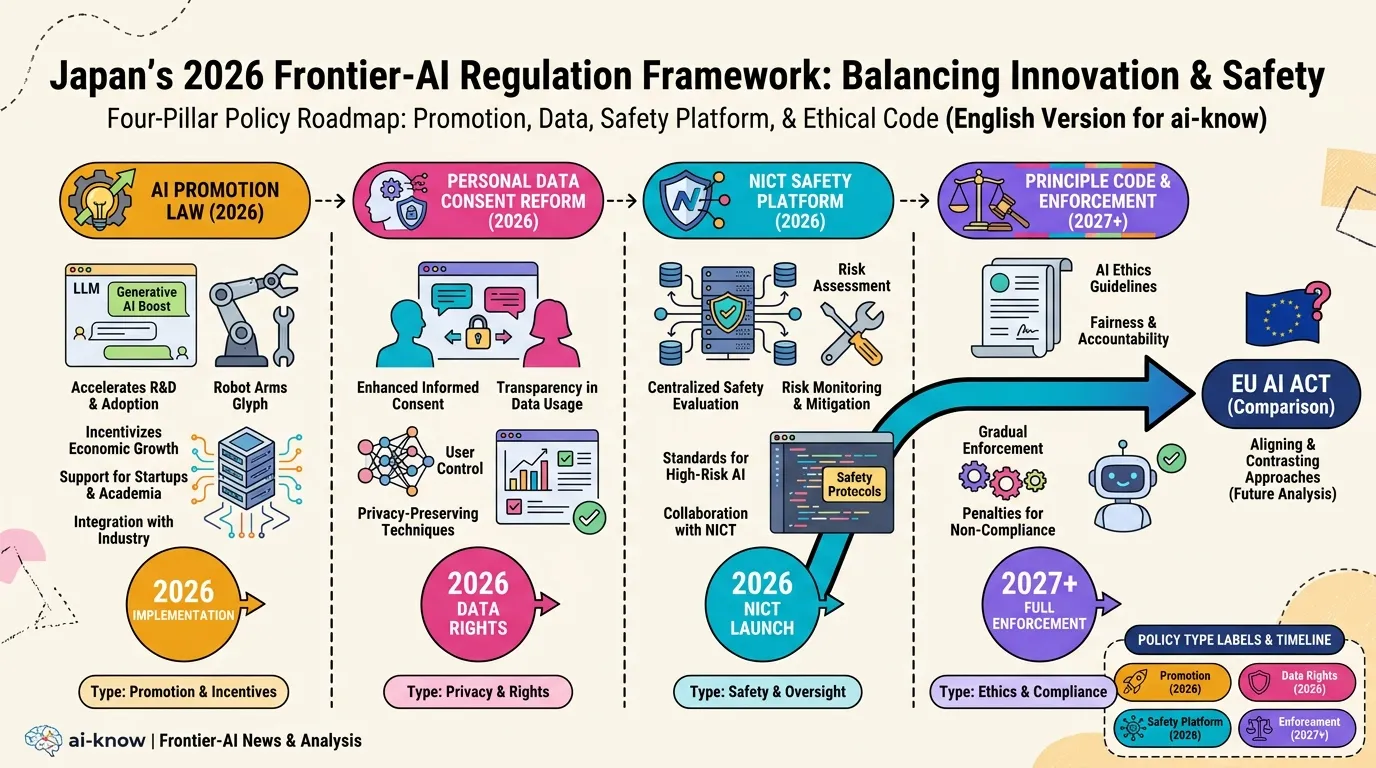

Japan’s Regulatory Trajectory

Japan’s “AI Promotion Law” and Personal Information Protection Act are both under active legislative development in 2026. Japanese policymakers have historically looked to EU frameworks as reference models, and the EU AI Act amendment is likely to accelerate domestic discussion on synthetic media regulation. Unlike the EU’s approach, Japan’s current approach is light-touch, relying on voluntary guidelines from the Cabinet Office and Ministry of Economy, Trade and Industry. The EU amendment puts concrete pressure on that posture.

What to Watch

The amendment is provisional: the final text still requires formal adoption by both Parliament and Council. Key remaining questions:

- Will the obligation fall on model providers, API aggregators, or deployers?

- What constitutes adequate “consent verification” for the purposes of compliance?

- Will open-source models (which cannot enforce inference-time restrictions) be treated differently than API-gated commercial models?

- What are the penalty thresholds and enforcement mechanisms?

Answers will emerge through the secondary legislation process over the coming months. Organizations building or distributing image generation products in the EU should begin compliance scoping now.

Related Articles

Japan AI Policy 2026: Promotion Law, Data Consent Reform, and the NICT Safety Platform

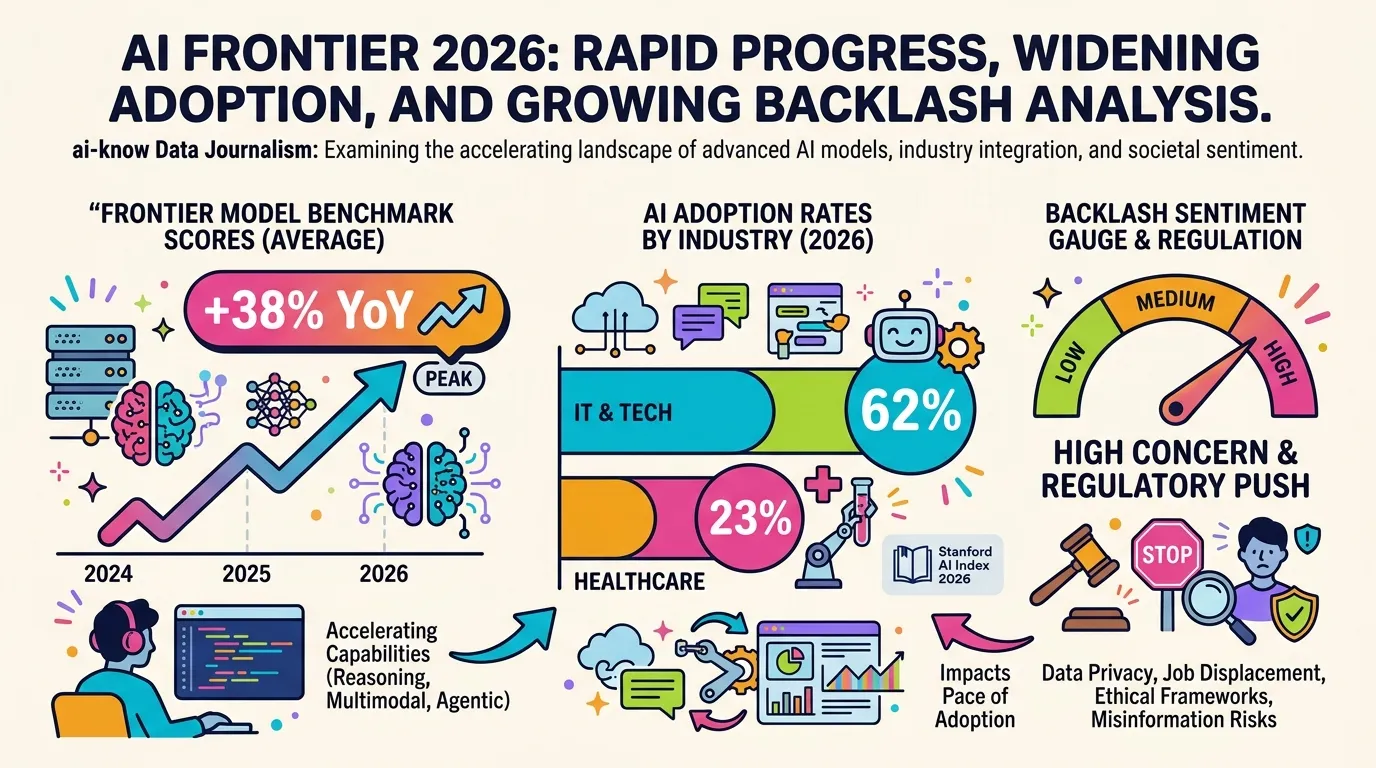

10 Charts That Explain AI in 2026: Progress, Adoption Gaps, and Backlash

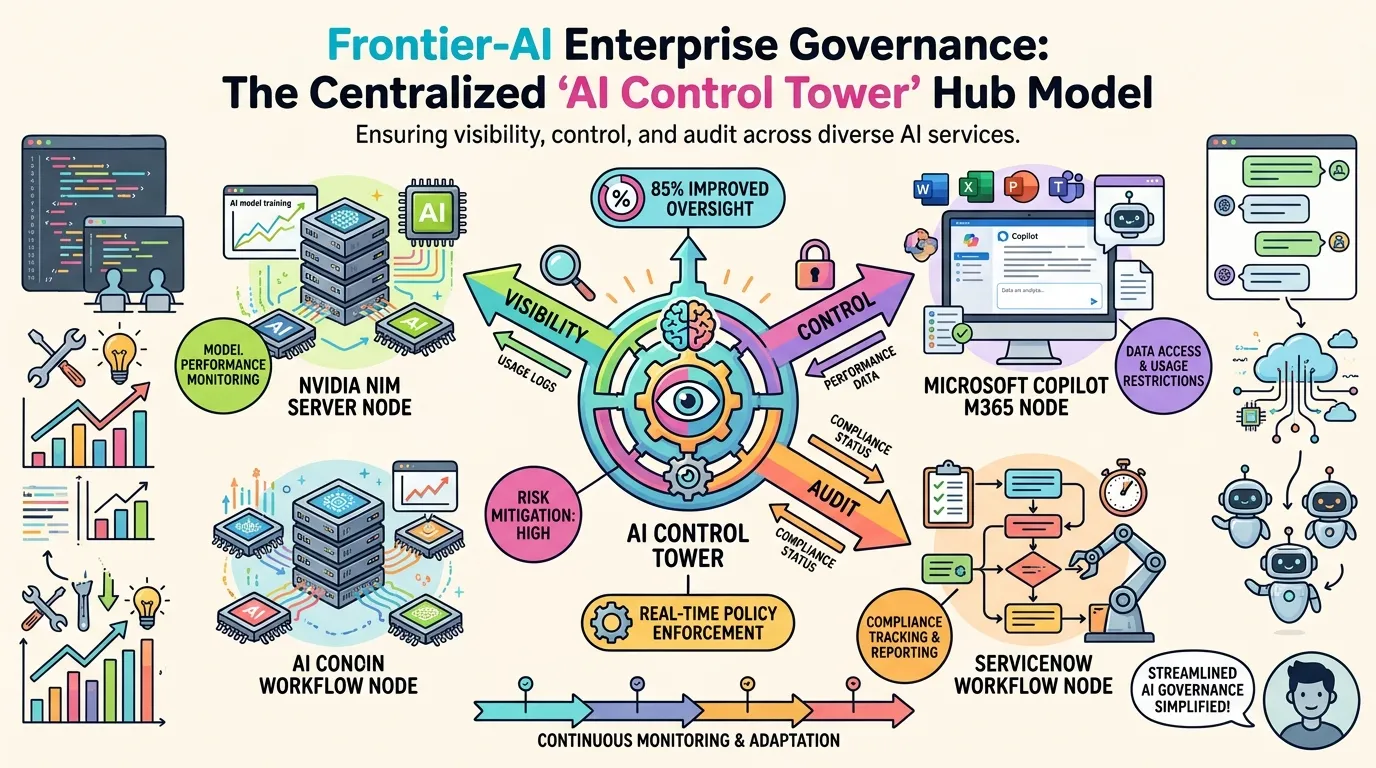

ServiceNow's AI Control Tower: Agentic AI Governance from Desktops to Data Centers

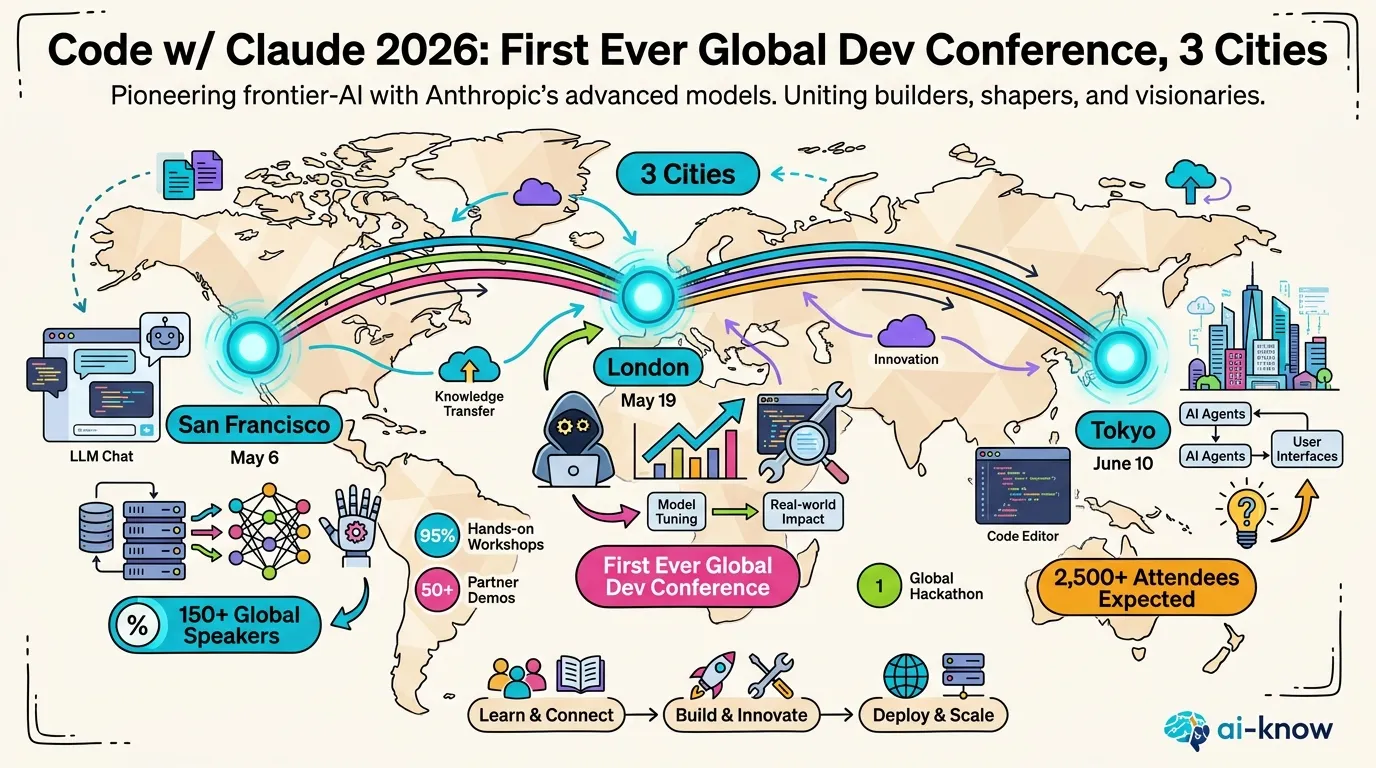

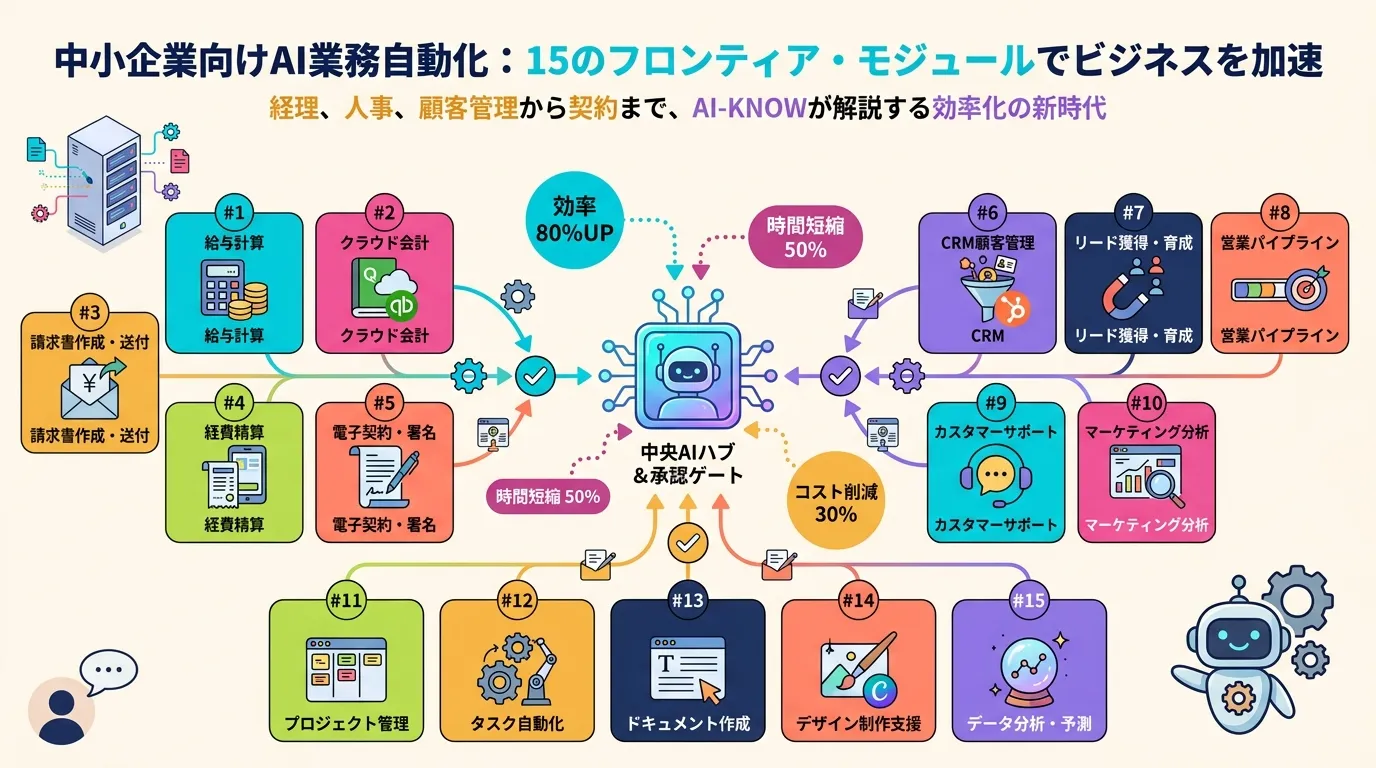

Anthropic Launches Claude for Small Business: 15 Agentic Workflows to Close the SMB AI Gap

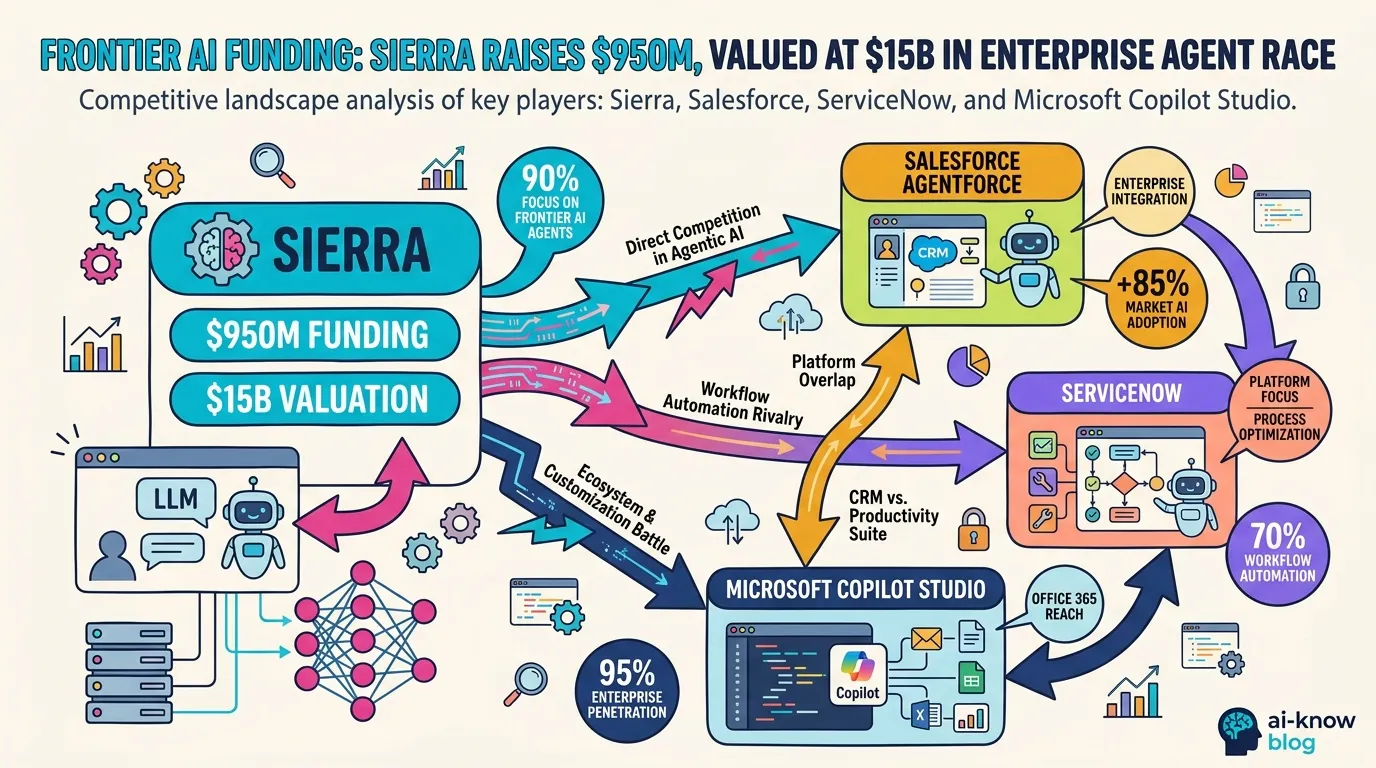

Sierra's $950M Raise Signals the Enterprise AI Consolidation War