AI Slop Floods HackerOne: How LLMs Are Breaking Bug Bounty Programs

HackerOne's temporary intake freeze and Google's new policy reveal a structural problem: LLMs make reports cheap to submit, while triage stays expensive.

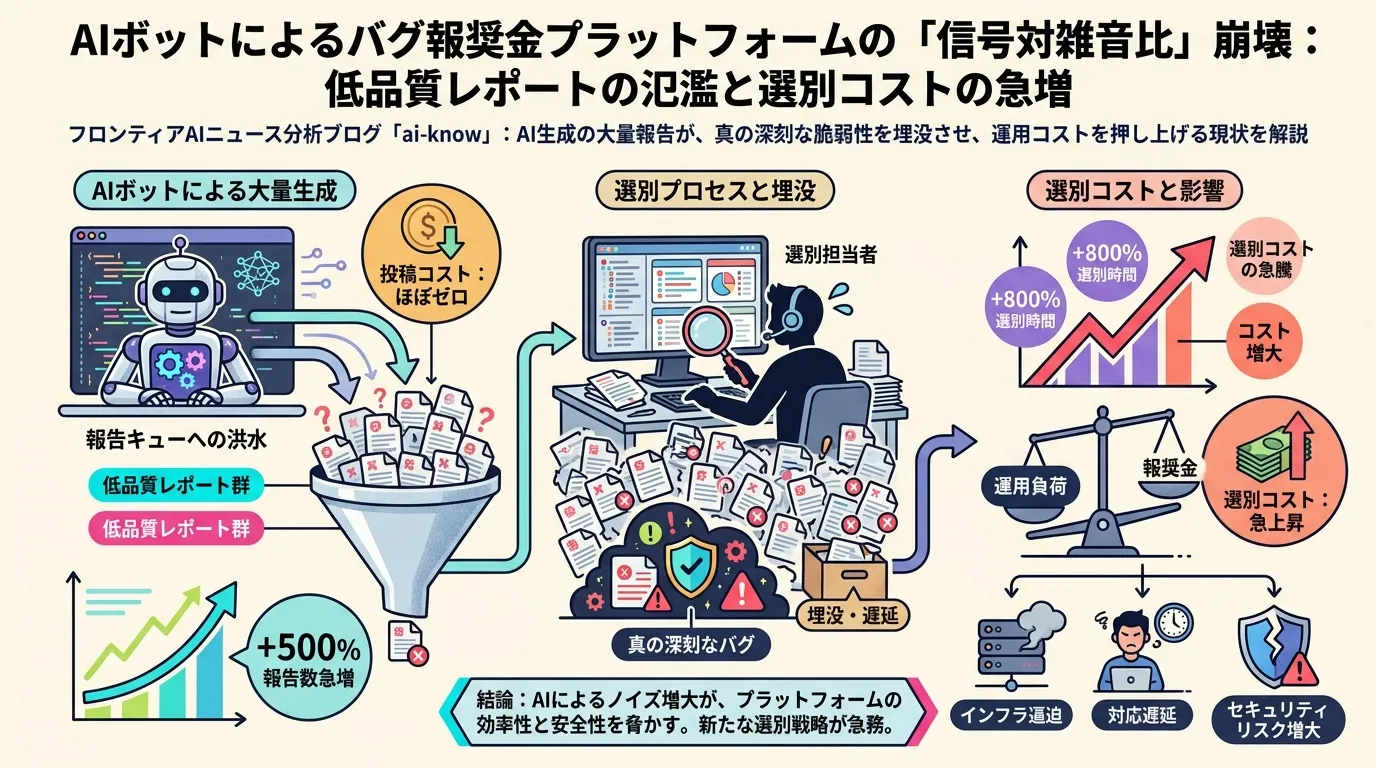

On March 28, 2026, HackerOne, the world’s largest vulnerability disclosure platform, temporarily halted new report intake. The reason: a surge of “AI slop” submissions, meaning AI-generated vulnerability reports that look plausible but contain hallucinated details, non-reproducible exploit paths, or outright fabricated CVE references. What had been a manageable noise problem became a triage crisis.

What Happened

Security firm Minimus tracked the upstream impact: across fifteen bug bounty report categories they monitor, five saw incoming volume fall by more than 50% after HackerOne’s freeze — a sign that a large fraction of pre-freeze submissions had been AI-generated and that real researchers had pulled back while the platform stabilised.

LLM output quality is at the core of the problem. Large language models can generate technically convincing prose about security vulnerabilities: they correctly identify vulnerability classes, reference real CVEs, and describe exploit primitives. But they frequently hallucinate the specifics that matter most: the exact code path, the version range, the preconditions, the proof-of-concept steps. A human reviewer cannot tell from the report text alone whether the exploit is real; they have to attempt to reproduce it. That process is expensive.

The economics of bug bounty programs depend on a critical assumption: that submitters have skin in the game. Researchers spend hours finding and documenting a vulnerability because a valid report earns a payout. AI collapses that cost structure. The marginal cost of generating a plausible-looking vulnerability report with an LLM approaches zero, so platforms are flooded with submissions that cost the submitter almost nothing but impose real triage costs on the receiving end.
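The asymmetry can be made concrete with a small worked example. The sketch below is illustrative only: the class, the function names, and every dollar figure and hit rate are hypothetical assumptions chosen to show the shape of the problem, not numbers reported by HackerOne or anyone else.

```python
from dataclasses import dataclass


@dataclass
class SubmissionEconomics:
    """Hypothetical inputs for one style of submission (not measured data)."""
    cost_per_report: float  # submitter's cost to produce one report, in USD
    hit_rate: float         # probability that a report turns out to be valid
    payout: float           # reward paid for a valid report, in USD
    triage_cost: float      # platform's cost to review one report, in USD


def submitter_expected_profit(e: SubmissionEconomics) -> float:
    """Expected profit per report, seen from the submitter's side."""
    return e.hit_rate * e.payout - e.cost_per_report


def triage_cost_per_valid_report(e: SubmissionEconomics) -> float:
    """Review spend the platform absorbs for each valid report it receives."""
    return e.triage_cost / e.hit_rate


# Assumed figures: a manual researcher versus unvalidated LLM output.
manual = SubmissionEconomics(cost_per_report=800.0, hit_rate=0.5,
                             payout=2000.0, triage_cost=150.0)
generated = SubmissionEconomics(cost_per_report=0.5, hit_rate=0.01,
                                payout=2000.0, triage_cost=150.0)

for name, e in (("manual", manual), ("generated", generated)):
    print(name,
          round(submitter_expected_profit(e), 2),
          round(triage_cost_per_valid_report(e), 2))
```

Under these assumed inputs, both strategies still have a positive expected profit for the submitter, but the platform's review spend per valid report is fifty times higher for the generated stream. That gap is the cost the submitter no longer bears.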

Google’s Policy Shift

Google’s response to the same dynamic is instructive. The company updated its OSS vulnerability reporting program to exclude reports generated automatically by AI and to require that submitters demonstrate reproducibility and substantive impact. Rather than banning AI assistance outright, which would be unenforceable and counterproductive, Google shifted the burden of proof. If you used AI to find or draft your report, you are still responsible for validating it before submission.

This is a meaningful incentive redesign. It preserves the legitimate use of AI for vulnerability research (assistive tools like CodeQL, AI-powered static analysis, and now LLM-assisted code review can genuinely accelerate finding real bugs) while removing the perverse incentive to submit unvalidated AI output and hope for a lucky hit.
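A burden-of-proof rule of this kind could be enforced at intake with a simple completeness gate. The following is a minimal sketch under stated assumptions: the `Report` fields, the `intake_problems` function, and the specific checks are hypothetical and do not describe Google's or HackerOne's actual submission forms or tooling.

```python
from dataclasses import dataclass, field


@dataclass
class Report:
    """Hypothetical report shape; real platforms define their own fields."""
    title: str
    affected_version: str = ""
    reproduction_steps: list[str] = field(default_factory=list)
    impact_statement: str = ""
    submitter_confirmed_repro: bool = False  # submitter attests they ran the steps


def intake_problems(report: Report) -> list[str]:
    """Return reasons to bounce a report before it reaches a human triager."""
    problems = []
    if not report.affected_version.strip():
        problems.append("missing affected version")
    if not any(step.strip() for step in report.reproduction_steps):
        problems.append("missing reproduction steps")
    if not report.impact_statement.strip():
        problems.append("missing impact statement")
    if not report.submitter_confirmed_repro:
        problems.append("submitter has not confirmed reproduction")
    return problems


draft = Report(title="Possible overflow in parser")
complete = Report(
    title="Overflow in parser",
    affected_version="(version range as verified by the submitter)",
    reproduction_steps=["build with sanitizers", "run the attached input"],
    impact_statement="crash with attacker-controlled input",
    submitter_confirmed_repro=True,
)

print(intake_problems(draft))
print(intake_problems(complete))
```

The gate deliberately does not ask whether AI was involved. It only checks that the submitter has supplied and vouched for the evidence a triager needs, which mirrors the shift from policing tools to policing validation. A gate like this cannot verify that the steps actually work; it only moves the first filtering step off the human reviewer.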

The Broader Pattern: AI Misuse and Signal Collapse

HackerOne’s crisis is not an isolated incident. The same dynamic — AI reduces submission cost, human triage cost stays constant, signal-to-noise ratio collapses — has played out across multiple domains in 2025-2026:

- arXiv preprint moderation (2025): AI-generated papers with plausible-sounding abstracts overwhelmed volunteer moderators.

- Stack Overflow (Q1 2026): AI-generated answers at scale reduced answer quality enough that active contributors began leaving.

- Stanford Web Content Study (May 2026): Estimated 35% of indexed web content is now AI-generated, with quality declining in long-tail domains.

What these events share is a structural asymmetry: generating content with AI is cheap; evaluating content for quality requires human judgment and remains expensive. Any community that relies on volume-based incentives — bug bounties, academic peer review, Q&A platforms, journalism tip lines — faces the same exposure.

Supply Chain Security Implications

For the open source ecosystem, the stakes are high. OSS libraries sit at the base of most enterprise software supply chains. If a flood of LLM-generated slop causes real vulnerability reports to be missed, delayed, or buried, the downstream risk propagates to every product built on those libraries.

Anthropic’s Claude Security beta, which promises to scan entire codebases for vulnerabilities using Claude Opus, is a data point in the opposite direction: AI being used by defenders to find real bugs at scale. The productive question for the security community is not how to ban AI from vulnerability research, but how to design disclosure workflows that preserve the economic incentive to submit validated, reproducible findings while filtering out unvalidated AI output.

What Comes Next

HackerOne restored intake after implementing tighter submission requirements and AI-detection heuristics. Whether those measures scale as generation models improve is unclear. The arms race dynamic — better filters, better generation, better filters — suggests this is a recurring problem, not a solved one.

The more durable solution is Google’s incentive approach: tie rewards to reproducibility, not just to plausibility. Programs that price triage costs into their payout structures — crediting researchers for high signal-to-noise ratios over time, not just for individual valid reports — may prove more robust to AI flooding.
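One way to picture a payout structure that prices in triage cost is a multiplier tied to each researcher's track record. This is a hedged sketch, not a scheme any program is known to use: the function names, the smoothing prior, and the `floor` and `ceiling` values are all assumptions picked for illustration.

```python
def signal_ratio(valid: int, total: int,
                 prior_valid: int = 1, prior_total: int = 2) -> float:
    """Smoothed share of a researcher's reports that were valid.

    The prior (assumed here as 1 valid out of 2) starts new researchers at 0.5
    so that one early miss or hit does not dominate their score.
    """
    return (valid + prior_valid) / (total + prior_total)


def adjusted_payout(base: float, valid: int, total: int,
                    floor: float = 0.25, ceiling: float = 1.5) -> float:
    """Scale a base reward linearly between floor and ceiling by track record."""
    multiplier = floor + (ceiling - floor) * signal_ratio(valid, total)
    return base * multiplier


base_reward = 1000.0  # hypothetical base reward for one valid finding

newcomer = adjusted_payout(base_reward, valid=0, total=0)
careful = adjusted_payout(base_reward, valid=9, total=10)
flooder = adjusted_payout(base_reward, valid=1, total=200)

print(round(newcomer, 2), round(careful, 2), round(flooder, 2))
```

With these assumed parameters, a researcher who validates before submitting earns more per finding than a newcomer, while an account that lands one valid report among two hundred sees its reward shrink toward the floor. A real program would also need to decide how far back the history window reaches and how to treat duplicates, which this sketch ignores.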

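Sources: AI生成の「ゴミ報告」が殺到、対応追い付かず疲弊……脆弱性発見の懸賞金制度に異変 [AI-generated “junk reports” flood in, leaving triagers exhausted and unable to keep up: upheaval in vulnerability bounty programs] | ITmedia News (2026)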

Related Articles

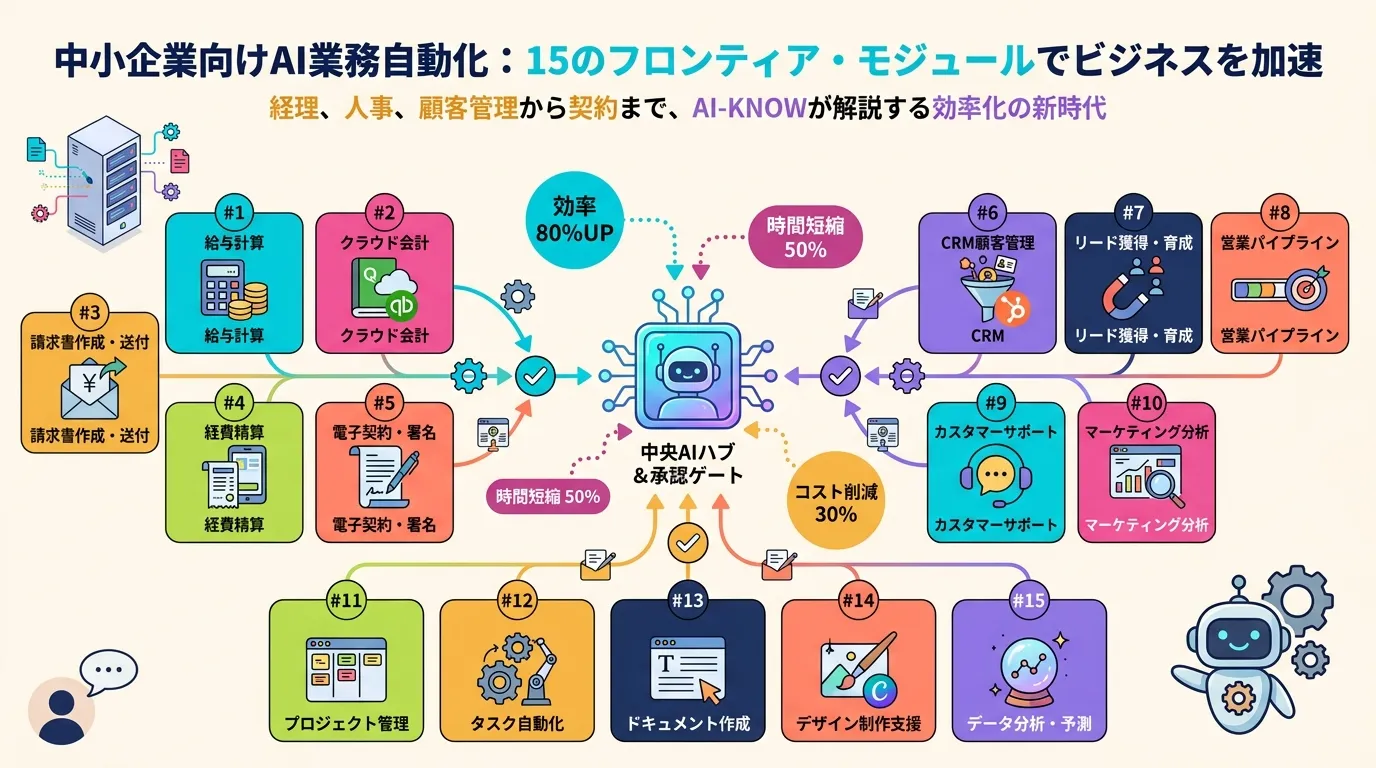

Anthropic Launches Claude for Small Business: 15 Agentic Workflows to Close the SMB AI Gap

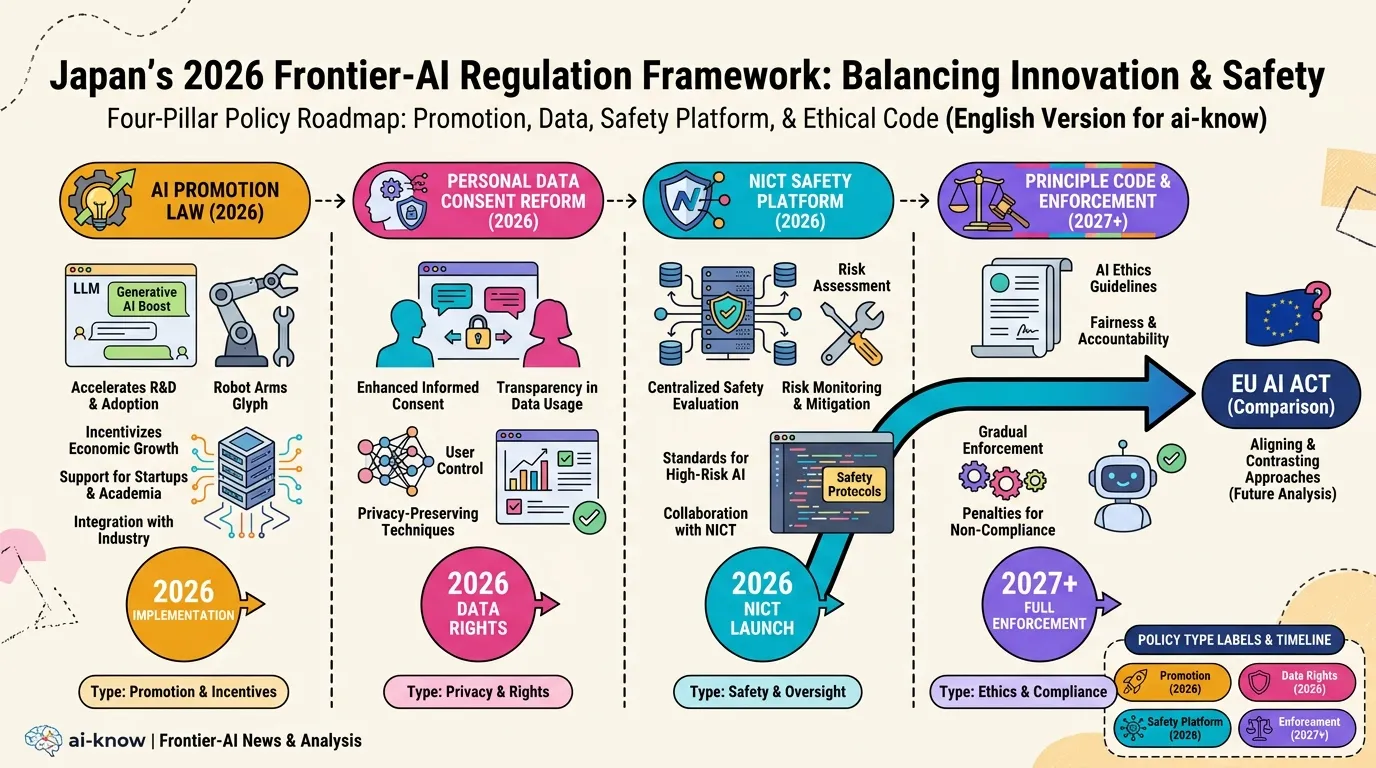

Japan AI Policy 2026: Promotion Law, Data Consent Reform, and the NICT Safety Platform

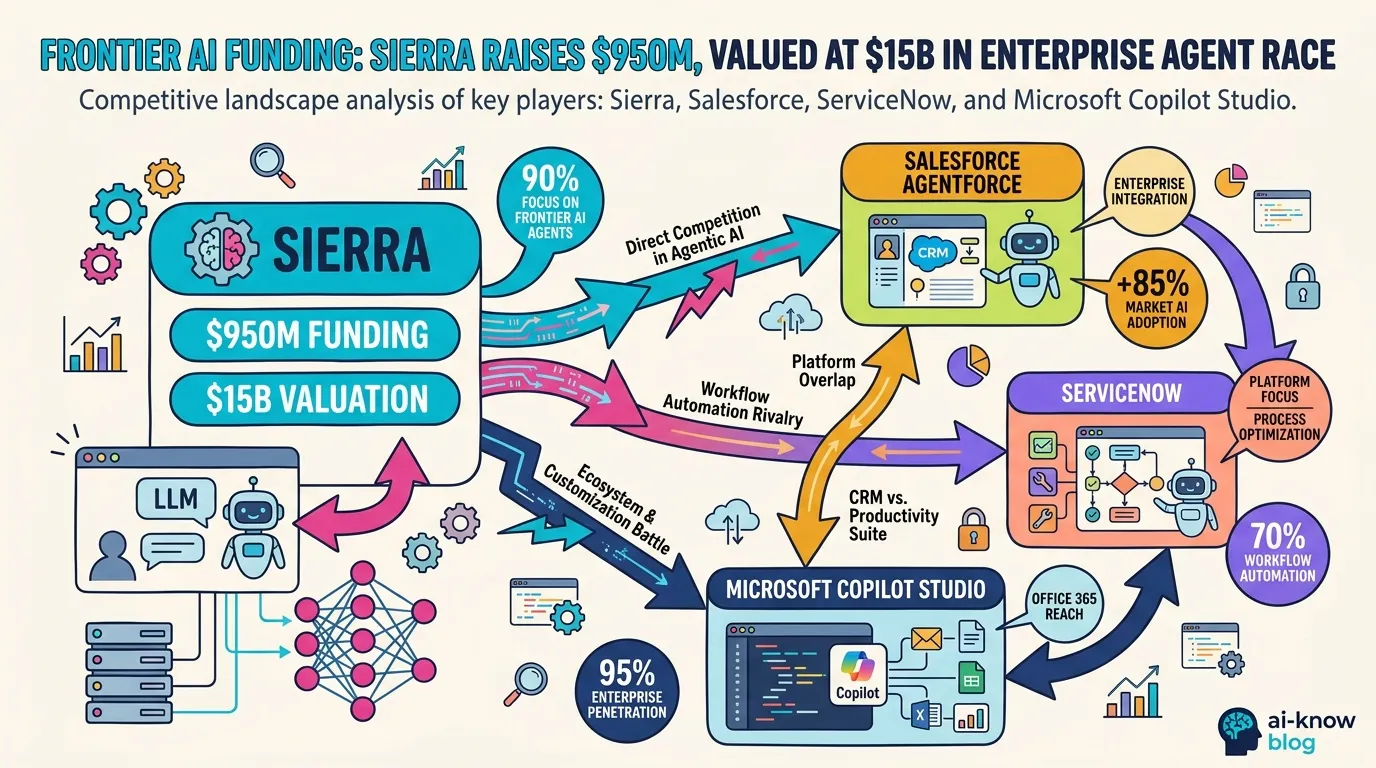

Sierra's $950M Raise Signals the Enterprise AI Consolidation War

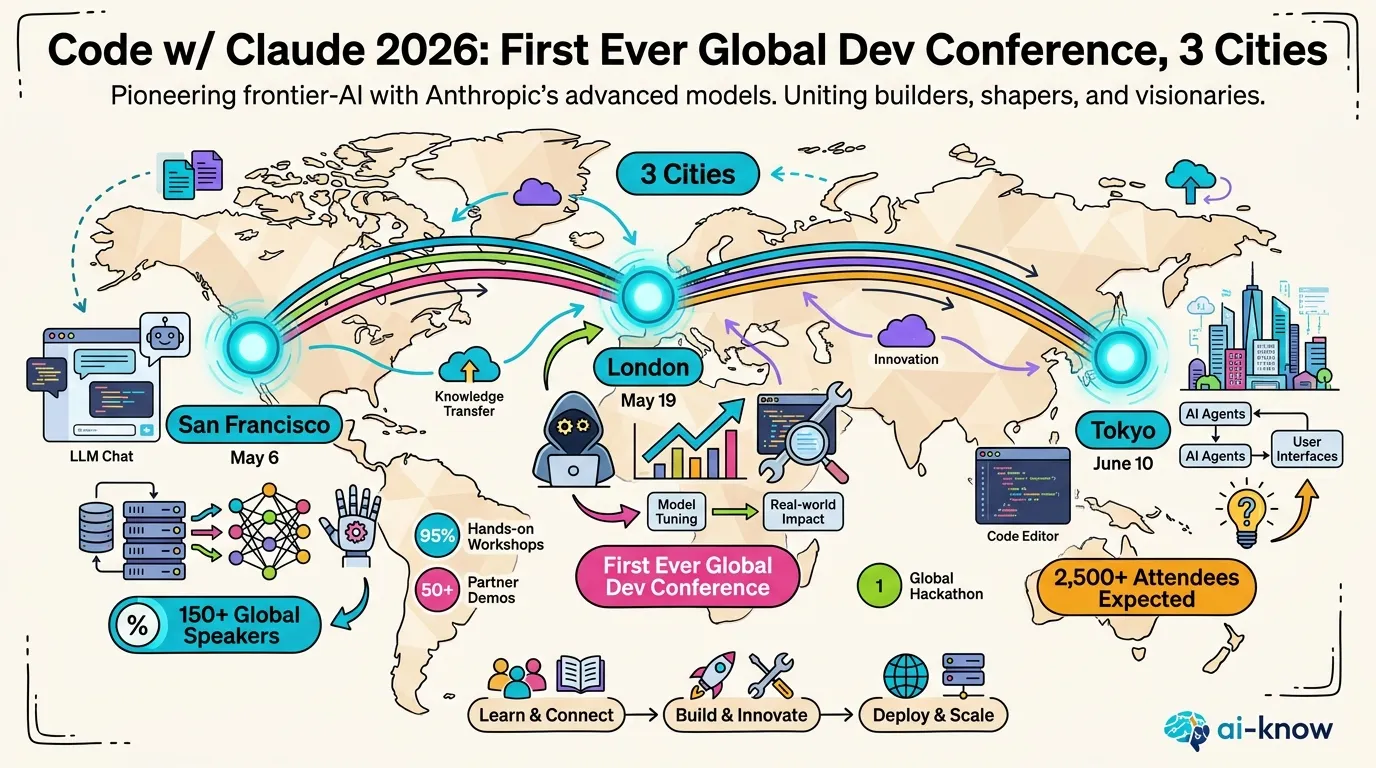

Anthropic Launches "Code w/ Claude 2026" — The Next Chapter of Agentic Coding Begins in SF

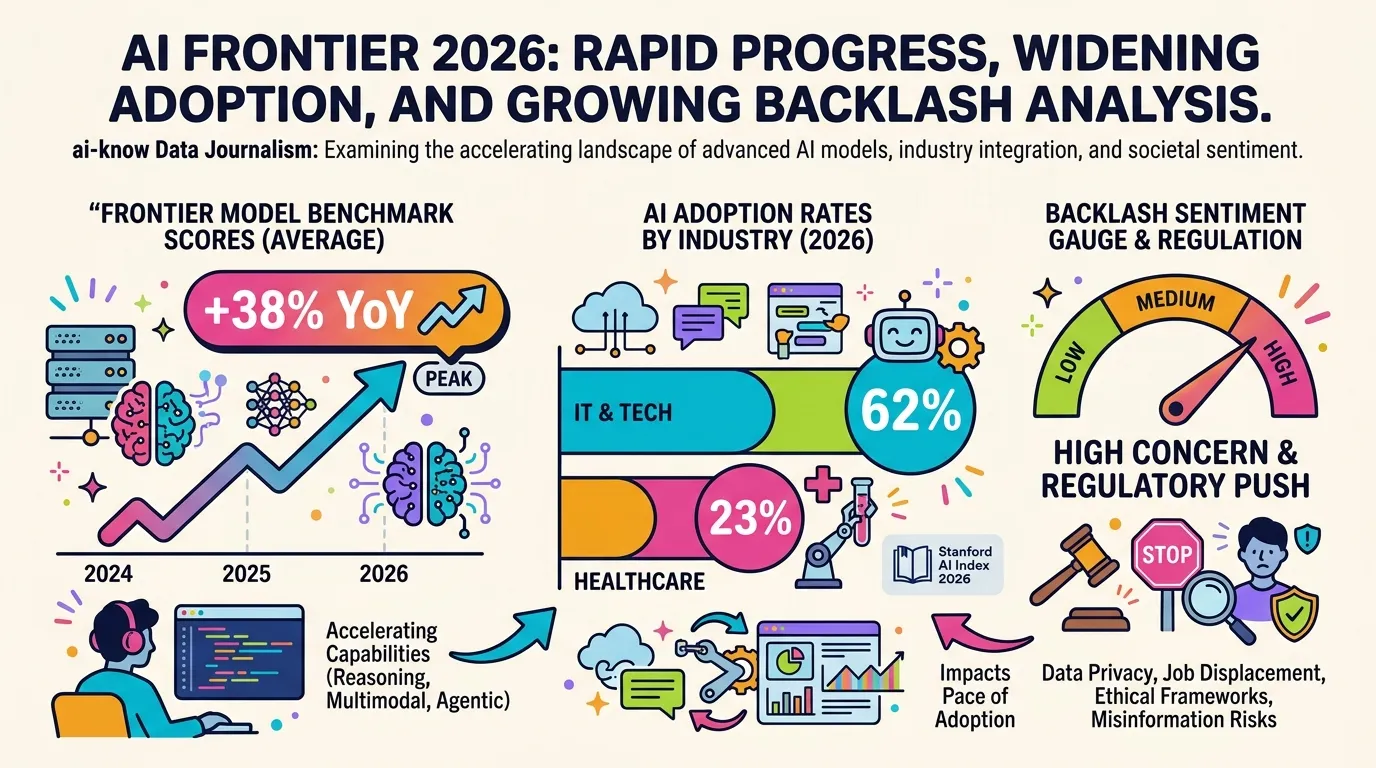

10 Charts That Explain AI in 2026: Progress, Adoption Gaps, and Backlash